Intelligent deep learning for human activity recognition in individuals with disabilities using sensor based IoT and edge cloud continuum

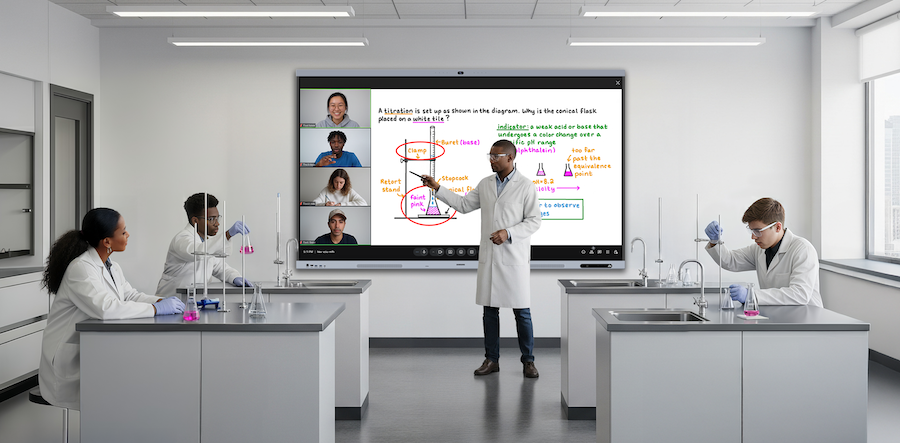

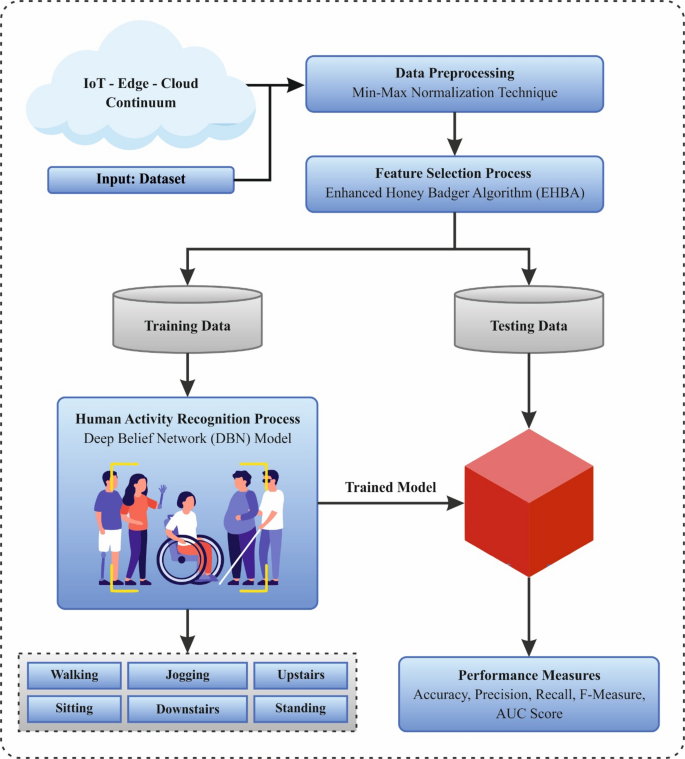

This manuscript presents the IDLTHAR-PDST technique, whose purpose is to efficiently recognize and interpret activities by leveraging sensor technology within a smart IoT-Edge-Cloud continuum. To accomplish this, the IDLTHAR-PDST approach comprises three sequential processes, namely data preprocessing, EHBA-based feature selection, and DBN-based classification, as represented in Fig. 2.

Overall process of IDLTHAR-PDST approach.

Data preprocessing

First, the IDLTHAR-PDST technique applies min-max normalization as a data pre-processing step to improve sensor data consistency and enhance model performance [28]. This method is chosen because it scales the data to a fixed range, usually between 0 and 1, which standardizes the input features. This is especially important in DL models, where features with differing magnitudes can harm the learning process by causing the model to favor features with larger values. With normalized data, the model can learn more efficiently and converge faster. Min-max normalization is also computationally efficient and preserves the relationships between data points, making it well suited to sensor data in HAR. Furthermore, the technique works well within the IoT-Edge-Cloud continuum, where real-time data from diverse sensors is processed.

Data pre-processing is important for enhancing the model's precision and minimizing computational complexity. First, irrelevant and redundant features are removed from the dataset. Then, min-max normalization is used to scale the value of each feature to the range \(\left[0,1\right]\), following Eq. (1):

$${x}^{{\prime }}=\frac{x-\text{min}\left(x\right)}{\text{max}\left(x\right)-\text{min}\left(x\right)}$$

(1)

where \(x\) is the original feature value, \({x}^{\prime}\) is the normalized value, and \(\text{max}\left(x\right)\) and \(\text{min}\left(x\right)\) are the maximum and minimum values of that feature, respectively. This conversion improves model performance by placing all features on a common scale.
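As a minimal sketch of Eq. (1), the snippet below scales each sensor feature column to \([0, 1]\) with NumPy. The function name `min_max_normalize`, the sample `readings`, and the handling of constant columns (mapped to 0 to avoid division by zero) are illustrative assumptions, not details taken from the paper.

```python
import numpy as np

def min_max_normalize(X, eps=1e-12):
    """Scale each column of X to [0, 1] following Eq. (1)."""
    X = np.asarray(X, dtype=float)
    col_min = X.min(axis=0)
    col_range = X.max(axis=0) - col_min
    # Constant columns have zero range; map them to 0 instead of dividing by zero.
    safe_range = np.where(col_range > eps, col_range, 1.0)
    return (X - col_min) / safe_range

# Hypothetical sensor readings: rows are samples, columns are features.
readings = np.array([[0.2, 100.0, 5.0],
                     [0.6, 300.0, 5.0],
                     [1.0, 200.0, 5.0]])
scaled = min_max_normalize(readings)
```

In a deployed pipeline the minimum and maximum would normally be computed on the training split only and reused for the test split, so that no test information leaks into the scaling.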

EHBA-based feature selection

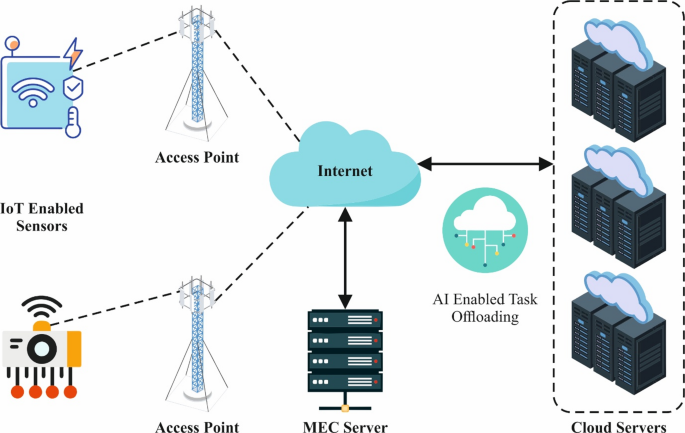

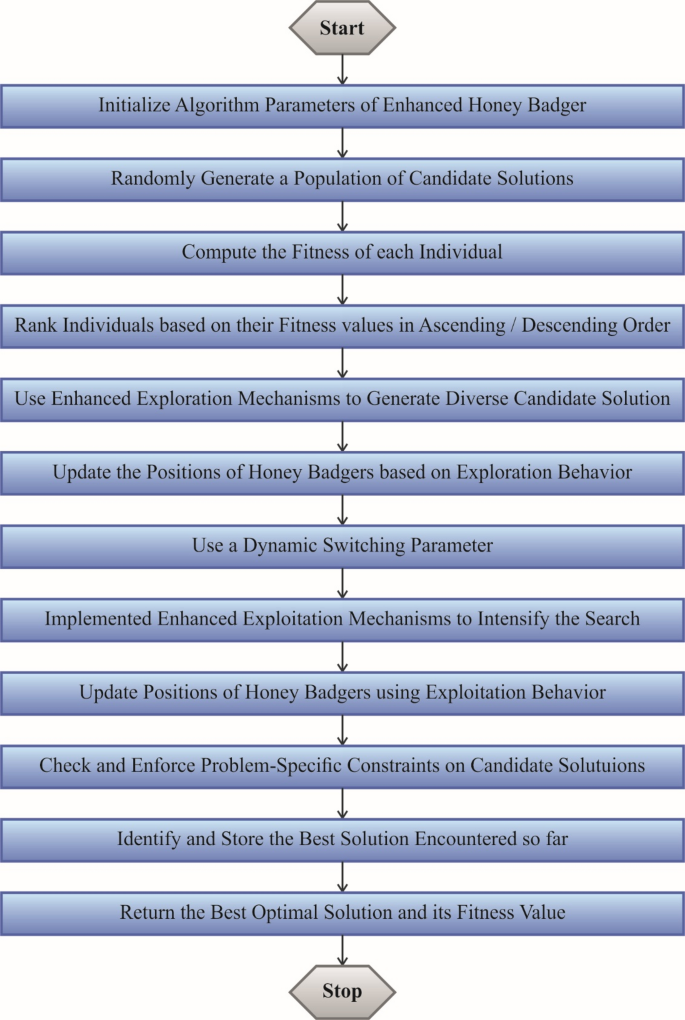

For feature subset selection, the EHBA is used to reduce dimensionality while retaining critical activity-related features [29]. This technique is chosen for its robustness and effectiveness in handling complex, high-dimensional datasets. EHBA mimics the aggressive foraging behavior of honey badgers, allowing it to explore the feature space effectively and choose the most relevant features while avoiding overfitting. This is especially valuable in HAR, where the data is often noisy and contains redundant or irrelevant features. Compared with other techniques, EHBA balances exploration and exploitation, which helps it find feature subsets that improve classification performance. Furthermore, the ability of the EHBA model to handle dynamic, real-time data from IoT sensors makes it appropriate for HAR applications in smart environments. Figure 3 illustrates the steps involved in the EHBA model.

Steps involved in the EHBA method.

EHBA selects the top features from the extracted features. The feature selection process aims to classify the data with higher precision while reducing dimensionality, overfitting, and complexity. Inspired by the foraging behavior of honey badgers, it performs well on optimization problems with complicated search spaces. A Lévy flight model is used to initialize the population, which improves the exploration and exploitation ability of EHBA. In this work, EHBA combines the Lévy flight method with the HBA. A balance between exploration and exploitation and fast convergence are two further benefits. The process of EHBA is explained below.

Initialization

The Lévy flight model is used to configure the parameters of EHBA, such as the maximum feature size, the number of iterations, and the archive size (N), and to select the best features. Equation (2) is applied to choose the features based on the upper and lower limits of the population size (KO):

$$ad_{i}=lrb_{i}+random_{1}\times \left(urb_{i}-lrb_{i}\right)$$

(2)

where \(random_{1}\) is a randomly generated number in \(\left(0,1\right)\); \(ad_{i}\) denotes the input data at the \({i}^{th}\) iteration; and \(urb_{i}\) and \(lrb_{i}\) are the upper and lower bounds of the search space. The improved HBA method applies the LF model to the initially empty archive \({K}_{f}\). The time step is denoted by \(f\); when \(f=0\), the initial population is generated as formulated in Eq. (3).

$$\overline{{K}_{f}}={a}_{i}+\beta \oplus L\left(\delta \right)$$

(3)

Here, the symbol \(\oplus\) indicates entry-wise multiplication, \(\delta\) is the distribution parameter of the Lévy flight \(L\left(\delta \right)\), and \(\beta\) is the random step size. Then, at the \({i}^{th}\) iteration, the features are selected based on EHBA's initial location.
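The initialization in Eqs. (2) and (3) can be sketched as follows. The text does not say how the Lévy steps \(L(\delta)\) are drawn, so this example assumes Mantegna's algorithm, a common choice. The names `levy_steps` and `init_population`, the default values of `beta` and `delta`, and the final clipping to the bounds are likewise assumptions made for illustration. The term \(a_{i}\) in Eq. (3) is read here as the uniformly sampled position \(ad_{i}\) from Eq. (2).

```python
import math
import numpy as np

def levy_steps(shape, delta=1.5, rng=None):
    """Draw Levy-distributed steps with Mantegna's algorithm (an assumed choice)."""
    rng = np.random.default_rng() if rng is None else rng
    sigma = (math.gamma(1 + delta) * math.sin(math.pi * delta / 2)
             / (math.gamma((1 + delta) / 2) * delta * 2 ** ((delta - 1) / 2))) ** (1 / delta)
    u = rng.normal(0.0, sigma, size=shape)
    v = rng.normal(0.0, 1.0, size=shape)
    return u / np.abs(v) ** (1 / delta)

def init_population(n_agents, lrb, urb, beta=0.01, delta=1.5, rng=None):
    """Initial population: uniform sampling (Eq. 2) plus a Levy perturbation (Eq. 3)."""
    rng = np.random.default_rng() if rng is None else rng
    lrb, urb = np.asarray(lrb, dtype=float), np.asarray(urb, dtype=float)
    # Eq. (2): uniform sample between the lower and upper bounds.
    ad = lrb + rng.random((n_agents, lrb.size)) * (urb - lrb)
    # Eq. (3): entry-wise Levy perturbation of the sampled positions.
    K0 = ad + beta * levy_steps(ad.shape, delta, rng)
    # Clipping to the bounds is an added safeguard, not stated in the text.
    return np.clip(K0, lrb, urb)

population = init_population(5, lrb=np.zeros(4), urb=np.ones(4),
                             rng=np.random.default_rng(0))
```

The heavy tail of the Lévy distribution occasionally produces long jumps, which is the usual motivation for using it to spread an initial population more widely than uniform sampling alone.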

Random generation

To search for the optimum solution, the intensity-based search behavior randomly generates the density factor of EHBA.

Objective function

After the optimum features are selected, the fitness function (FF) evaluates the density factor of EHBA. The enhanced strategy is then used with the HBA approach to identify the optimal features in fewer iterations and with effective classification precision. To improve classification precision, two FFs are used: the first (FF1) minimizes the error rate, while the second (FF2) selects the proper features, as given in Eqs. (4)–(5):

$$\text{min}\left(FF_{1}\right)=\left(\frac{1}{mi}{\sum }_{f=1}^{mi}\frac{MI_{error}}{MI_{AI}}\right)\times 100$$

(4)

Here, \(MI_{error}\) refers to the number of incorrect predictions, and \(MI_{AI}\) stands for the number of instances in the dataset. The second FF is stated below.

$$\text{min}\left(FF_{2}\right)=\left({\sum }_{i=1}^{F}ad_{i}\right)\times 100$$

(5)

Here, \(F\) denotes the number of initial features generated by the density factor.
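The two fitness functions can be illustrated with a short sketch. It assumes that Eq. (4) averages the misclassification rate over \(mi\) evaluation runs and that the selection vector \(ad\) in Eq. (5) is binarized with a threshold before summation. Both readings, as well as the function names and the toy labels, are assumptions made for illustration only.

```python
import numpy as np

def ff1_error_rate(y_true_runs, y_pred_runs):
    """Eq. (4): mean misclassification rate over the evaluation runs, in percent."""
    rates = [np.mean(np.asarray(t) != np.asarray(p))
             for t, p in zip(y_true_runs, y_pred_runs)]
    return float(np.mean(rates) * 100)

def ff2_feature_cost(ad, threshold=0.5):
    """Eq. (5): sum of the binarized selection vector, times 100."""
    mask = np.asarray(ad) > threshold  # binarization is an assumption
    return float(mask.sum() * 100)

# Toy labels for two hypothetical evaluation runs.
y_true = [[0, 1, 2, 1], [2, 2, 0, 1]]
y_pred = [[0, 1, 1, 1], [2, 0, 0, 0]]
error_percent = ff1_error_rate(y_true, y_pred)
feature_cost = ff2_feature_cost([0.9, 0.1, 0.7, 0.4])
```

Minimizing both values together favors small feature subsets that still classify accurately, which is the trade-off the two FFs are described as capturing.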

Updating the density factor of EHBA to choose the best features

The ideal features are selected with the density factor of EHBA. A smooth transition from exploration to exploitation is ensured by the density factor \(\alpha\). Equation (6) is applied to gradually reduce randomness and regulate the density factor, which reduces the number of iterations.

$$\overline{{K}_{f+1}}=ad_{prey}+Dr\times \alpha \times ad_{prey}+Dr\times random_{3}\times \beta \times hb_{i}\times \left[\cos \left(2\pi \cdot random_{4}\right)\right]\times \left[1-\cos \left(2\pi \cdot random_{5}\right)\right]$$

(6)

Equation (6) is replaced by Eq. (7) when the randomly generated value is lower than 0.5:

$$\overline{{K}_{f+1}}=ad_{prey}+Dr\times random_{7}\times \alpha \times hb_{i}$$

(7)

where \(ad_{prey}\) is the best position; \(\alpha\) denotes the honey badger's foraging ability; \(hb_{i}\) is the distance between the \({i}^{th}\) honey badger and the prey; \(random_{3}\), \(random_{4}\), \(random_{5}\), and \(random_{7}\) are randomly generated numbers in \(\left(0,1\right)\); and \(Dr\) represents the search direction. Equations (8)–(9) are applied to select the top features, and Eqs. (6) and (7) to reduce the error rate:

$$Dr=\left\{\begin{array}{ll}1 & \text{if } random_{6}\le 0.5\\ -1 & \text{otherwise}\end{array}\right.$$

(8)

$$\beta =G\times \text{exp}\left(-\frac{f}{{f}_{maximum}}\right)$$

(9)

Here, \(G\) is a constant with \(G\ge 1\) and a default value of 2; \(random_{6}\) is a randomly generated number in \(\left(0,1\right)\); and \({f}_{maximum}\) is the maximum number of iterations. From the population \({K}_{f+1}\), the best features are selected with Eqs. (8) and (9). The generation counter is then updated as \(i=i+1\), and the FF is evaluated again for the updated population.
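A minimal sketch of one position update under Eqs. (6)–(9) is given below. Several details are assumptions rather than statements from the text: the distance \(hb_{i}\) is taken as the difference between the prey position and the current position, the second cosine term uses its own random draw (the text lists a random 5), the default `alpha` is arbitrary, and the function name `ehba_update` is hypothetical.

```python
import numpy as np

def ehba_update(K, ad_prey, f, f_max, alpha=6.0, G=2.0, rng=None):
    """One position update for a population K (agents x features), Eqs. (6)-(9)."""
    rng = np.random.default_rng() if rng is None else rng
    n = K.shape[0]
    beta = G * np.exp(-f / f_max)                        # Eq. (9): decays with iteration f
    Dr = np.where(rng.random((n, 1)) <= 0.5, 1.0, -1.0)  # Eq. (8): search direction
    hb = ad_prey - K                                     # distance to prey (assumed form)
    r3, r4, r5, r7 = (rng.random((n, 1)) for _ in range(4))
    trig = np.cos(2 * np.pi * r4) * (1 - np.cos(2 * np.pi * r5))
    eq6 = ad_prey + Dr * alpha * ad_prey + Dr * r3 * beta * hb * trig
    eq7 = ad_prey + Dr * r7 * alpha * hb
    # Eq. (7) replaces Eq. (6) when a fresh random value is below 0.5.
    use_eq7 = rng.random((n, 1)) < 0.5
    return np.where(use_eq7, eq7, eq6)

rng = np.random.default_rng(1)
K = rng.random((4, 3))   # four hypothetical agents, three features
best = K[0].copy()       # stand-in for the best position found so far
K_next = ehba_update(K, best, f=1, f_max=50, rng=rng)
```

In a full optimizer this update would sit inside a loop that re-evaluates the fitness functions, keeps the better of the old and new positions, and stops at \(f_{maximum}\).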

End.

With complexity reduced, the EHBA model chooses the top features from the extracted features in an optimal way. The model then repeats steps 3 and 4 until the termination conditions are fulfilled.

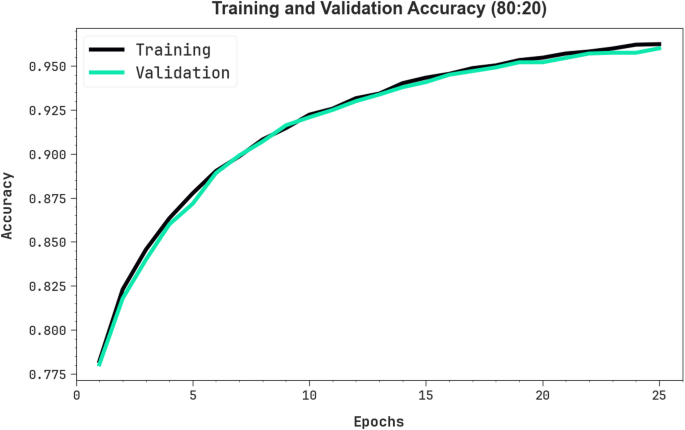

DBN-based classification process

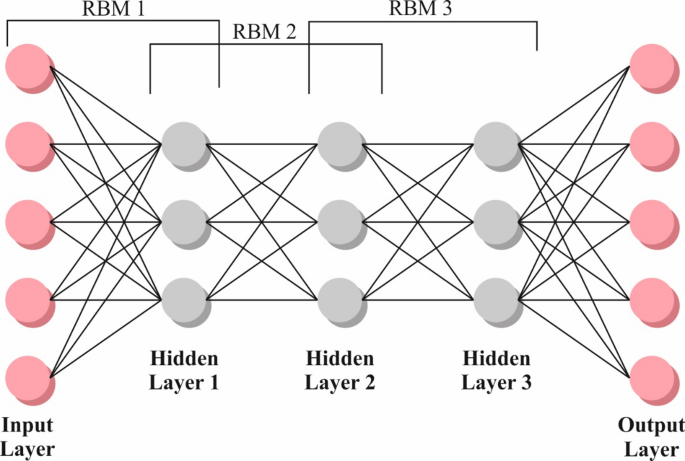

Finally, the DBN model is employed for the recognition of human activities [30]. This technique is chosen for its capability to model complex hierarchical features from large, high-dimensional datasets. DBNs comprise multiple layers of Restricted Boltzmann Machines (RBMs) that can efficiently learn feature representations without extensive feature engineering, making them well suited to HAR tasks. DBNs excel at capturing the complex patterns and dependencies in sensor data, which is often noisy and unstructured. Their deep architecture enables the learning of abstract features, improving classification accuracy compared with shallow models. Additionally, DBNs can efficiently handle the large volumes of data generated by IoT sensors in real time, making them highly appropriate for smart IoT-edge-cloud systems in HAR applications. Figure 4 represents the architecture of the DBN.

DBNs are NNs intended to enhance generalization and to handle the flow of information in data. They concentrate on estimating the underlying data distribution while reducing dependence on BMs, leading to more precise models. DBNs use a multilayered training approach, integrating RBMs at every layer, each of which is trained in turn. Backpropagation-based methods then enhance the training procedure by fine-tuning the weights to improve performance and minimize overfitting. The DBN is particularly proficient at extracting higher-level features from the training data and at capturing the relations among the class labels. This layered RBM training provides a detailed representation of the data. DBNs have shown their efficiency in different areas, such as language processing and image recognition, owing to their ability to model complex data distributions.

To determine the joint distribution of the input (visible) and hidden layers, the energy function is applied as in Eq. (10):

$$P\left(v, h\right)=\frac{{e}^{-E\left(v,h\right)}}{{\sum }_{v,h}{e}^{-E\left(v,h\right)}}$$

(10)

Here, \(E\left(v,h\right)\) denotes the RBM's energy function, which is calculated as follows:

$$E\left(v, h\right)=-\sum _{i=1}{a}_{i}{v}_{i}-\sum _{j=1}{b}_{j}{h}_{j}-\sum _{i,j}{v}_{i}{h}_{j}{w}_{ij}$$

(11)

where \({w}_{ij}\) is the weight between visible node \(i\) and hidden node \(j\), and \({a}_{i}\) and \({b}_{j}\) are the biases of the visible and hidden nodes, respectively.
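To make Eqs. (10) and (11) concrete, the sketch below evaluates the energy of a tiny binary RBM and normalizes over all states by brute-force enumeration. This is feasible only for a handful of units and is meant purely as an illustration. The parameter values and the function names `energy` and `joint_distribution` are made up for the example.

```python
import itertools
import numpy as np

def energy(v, h, a, b, W):
    """Eq. (11): E(v, h) = -a.v - b.h - v^T W h."""
    return -(a @ v) - (b @ h) - (v @ W @ h)

def joint_distribution(a, b, W):
    """Eq. (10) by enumerating every binary (v, h) state of a tiny RBM."""
    states_v = [np.array(s) for s in itertools.product([0, 1], repeat=len(a))]
    states_h = [np.array(s) for s in itertools.product([0, 1], repeat=len(b))]
    weights = {(tuple(v), tuple(h)): np.exp(-energy(v, h, a, b, W))
               for v in states_v for h in states_h}
    Z = sum(weights.values())  # partition function (denominator of Eq. 10)
    return {state: w / Z for state, w in weights.items()}

# Two visible units and one hidden unit with arbitrary illustrative parameters.
a = np.array([0.1, -0.2])
b = np.array([0.3])
W = np.array([[0.5], [-0.4]])
P = joint_distribution(a, b, W)
```

The number of states grows as \(2^{m+n}\), which is why practical RBM training avoids computing the partition function and relies on sampling-based gradient estimates instead.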

In this work, a stochastic gradient method based on the log-probability is applied to train the model efficiently on the data patterns. This is achieved by choosing the parameters \(a\) and \(b\) appropriately for the RBM. Given a training instance, the probability of the visible vector can be written in the following way:

$$P\left(v\right)=\frac{{\sum }_{h}{e}^{-E\left(v,h\right)}}{{\sum }_{v,h}{e}^{-E\left(v,h\right)}}$$

(12)

Taking the derivative of the log-probability, the stochastic gradient updates are applied as follows:

$${w}_{t+1}={w}_{t}+\vartheta \left[P\left(h|v\right){v}^{T}-P\left(\widehat{h}|\widehat{v}\right){\widehat{v}}^{T}\right]-\lambda {w}_{t}+\alpha \Delta {w}_{t-1}$$

(13)

$${a}_{t+1}={a}_{t}+\vartheta \left(v-\widehat{v}\right)+\alpha \Delta {a}_{t-1}$$

(14)

$${b}_{t+1}={b}_{t}+\vartheta \left(P\left(h|v\right)-P\left(\widehat{h}|\widehat{v}\right)\right)+\alpha \Delta {b}_{t-1}$$

(15)

Now,

$$P\left({h}_{j}=1|v\right)=\sigma \left({\sum }_{i=1}^{m}{w}_{ij}{v}_{i}+{b}_{j}\right)$$

(16)

and

$$P\left({v}_{i}=1|h\right)=\sigma \left({\sum }_{j=1}^{n}{w}_{ij}{h}_{j}+{a}_{i}\right)$$

(17)

where \(n\) is the number of hidden nodes and \(m\) is the number of visible nodes. The learning rate is denoted by \(\vartheta\), and the logistic sigmoid function by \(\sigma\). The momentum weight and the weight decay are denoted by \(\alpha\) and \(\lambda\), respectively. The optimization procedure combines the gradient-descent method with the backpropagation model. Given the complexity of handling the variations and the error function of NP problems, conventional techniques are often used. Performance is mostly optimized by minimizing the Mean Square Error (MSE), which measures the squared difference between the expected value and the NN's output, as shown in Eq. (18):
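The update rules in Eqs. (13)–(17) resemble one step of contrastive divergence with a single reconstruction pass (CD-1). The text does not name the sampling scheme, so CD-1 is an assumption here, as are the hyperparameter defaults, the mini-batch averaging, and the function name `cd1_step`. Weights are stored as a visible-by-hidden matrix to match the index order of \(w_{ij}\).

```python
import numpy as np

def sigmoid(x):
    return 1.0 / (1.0 + np.exp(-x))

def cd1_step(v, W, a, b, vel, lr=0.1, momentum=0.5, decay=1e-4, rng=None):
    """One CD-1 update with momentum and weight decay, mirroring Eqs. (13)-(17)."""
    rng = np.random.default_rng() if rng is None else rng
    ph = sigmoid(v @ W + b)                        # Eq. (16): P(h = 1 | v)
    h = (rng.random(ph.shape) < ph).astype(float)  # sample binary hidden states
    v_hat = sigmoid(h @ W.T + a)                   # Eq. (17): reconstructed visibles
    ph_hat = sigmoid(v_hat @ W + b)                # hidden probabilities of the reconstruction
    n = v.shape[0]
    # Eq. (13): data term minus reconstruction term, weight decay, and momentum.
    dW = lr * (v.T @ ph - v_hat.T @ ph_hat) / n - decay * W + momentum * vel["W"]
    da = lr * (v - v_hat).mean(axis=0) + momentum * vel["a"]    # Eq. (14)
    db = lr * (ph - ph_hat).mean(axis=0) + momentum * vel["b"]  # Eq. (15)
    vel.update(W=dW, a=da, b=db)  # remember the increments for the next momentum term
    return W + dW, a + da, b + db

rng = np.random.default_rng(0)
v = (rng.random((8, 6)) > 0.5).astype(float)  # toy batch: 8 samples, 6 visible units
W = 0.01 * rng.standard_normal((6, 3))        # 3 hidden units
a, b = np.zeros(6), np.zeros(3)
vel = {"W": np.zeros_like(W), "a": np.zeros_like(a), "b": np.zeros_like(b)}
W, a, b = cd1_step(v, W, a, b, vel, rng=rng)
```

Stacking several RBMs trained this way, each on the hidden activations of the one below, gives the layer-wise pretraining that precedes backpropagation fine-tuning in a DBN.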

$$Error=\frac{1}{T}{\sum }_{j=1}^{N}{\sum }_{i=1}^{T}{\left({D}_{j}\left(i\right)-{Y}_{j}\left(i\right)\right)}^{2}$$

(18)

Here, \(T\) is the number of data samples, and \(N\) is the number of output nodes. \({D}_{j}\left(i\right)\) is the value of the \({j}^{th}\) DBN output node at time \(i\), and \({Y}_{j}\left(i\right)\) is the \({j}^{th}\) component of the desired value. This procedure is repeated for the DBN until the stopping condition is reached. Algorithm 1 outlines the DBN technique.
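Eq. (18) sums the squared differences over all \(N\) output nodes and \(T\) samples and divides by \(T\). A minimal NumPy sketch follows; the function name `dbn_mse` and the toy arrays are illustrative assumptions.

```python
import numpy as np

def dbn_mse(D, Y):
    """Eq. (18): squared error summed over output nodes and samples, divided by T."""
    D, Y = np.asarray(D, dtype=float), np.asarray(Y, dtype=float)
    T = D.shape[0]  # rows are samples, columns are output nodes
    return float(np.sum((D - Y) ** 2) / T)

# Toy network outputs and one-hot desired values for three samples and two output nodes.
D = np.array([[0.9, 0.1], [0.2, 0.8], [0.6, 0.4]])
Y = np.array([[1.0, 0.0], [0.0, 1.0], [1.0, 0.0]])
error = dbn_mse(D, Y)
```

Because the sum runs over output nodes without dividing by \(N\), the value scales with the number of classes, which matters when comparing errors across models with different output sizes.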

Performance validation

The performance of the IDLTHAR-PDST approach is validated on the dataset [31]. This dataset contains 12,000 instances across 6 classes, as depicted in Table 2.

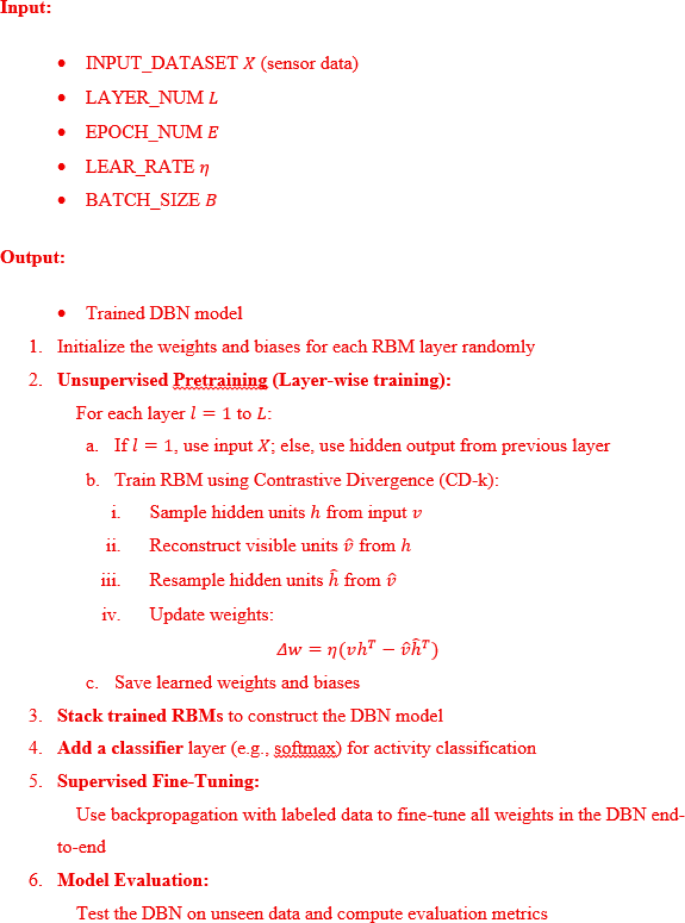

Figure 5 portrays the confusion matrices produced by the IDLTHAR-PDST approach under 80:20 and 70:30 TRPH/TSPH splits. The results indicate that the IDLTHAR-PDST approach detects and identifies all 6 classes accurately.

Confusion matrices of (a, c) TRPH of 80% and 70% and (b, d) TSPH of 20% and 30%.

Table 3 describes the HAR results of the IDLTHAR-PDST method under 80% and 70% TRPH and 20% and 30% TSPH. The outcomes suggest that the IDLTHAR-PDST method recognizes the samples correctly. With 80%TRPH, the IDLTHAR-PDST method provides average \(acc{u}_{y}\), \(pre{c}_{n}\), \(rec{a}_{l}\), \({F1}_{measure}\), and \(AU{C}_{score}\) of 98.75%, 96.24%, 96.24%, 96.24%, and 97.74%, respectively. With 20%TSPH, the IDLTHAR-PDST method gives average \(acc{u}_{y}\), \(pre{c}_{n}\), \(rec{a}_{l}\), \({F1}_{measure}\), and \(AU{C}_{score}\) of 98.67%, 96.00%, 95.98%, 95.98%, and 97.59%, respectively. With 70%TRPH, the IDLTHAR-PDST methodology presents average \(acc{u}_{y}\), \(pre{c}_{n}\), \(rec{a}_{l}\), \({F1}_{measure}\), and \(AU{C}_{score}\) of 96.67%, 90.07%, 90.05%, 90.01%, and 94.03%, respectively. Finally, with 30%TSPH, the IDLTHAR-PDST methodology provides average \(acc{u}_{y}\), \(pre{c}_{n}\), \(rec{a}_{l}\), \({F1}_{measure}\), and \(AU{C}_{score}\) of 96.73%, 90.19%, 90.09%, 90.09%, and 94.07%, respectively.
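As a sketch of how class-averaged metrics of this kind can be derived from a confusion matrix, the snippet below computes one-vs-rest accuracy, precision, recall, and F1 per class and averages them. Whether the paper averages in exactly this way is an assumption, although the reported gap between average accuracy and average recall appears consistent with per-class one-vs-rest accuracy. The confusion matrix values and the function name `macro_metrics` are invented for illustration.

```python
import numpy as np

def macro_metrics(cm):
    """Class-averaged metrics (in percent) from a confusion matrix (rows = true labels)."""
    cm = np.asarray(cm, dtype=float)
    total = cm.sum()
    tp = np.diag(cm)
    fp = cm.sum(axis=0) - tp  # predicted as the class but belonging to another
    fn = cm.sum(axis=1) - tp  # belonging to the class but predicted as another
    tn = total - tp - fp - fn
    accuracy = (tp + tn) / total  # one-vs-rest accuracy per class
    precision = tp / (tp + fp)
    recall = tp / (tp + fn)
    f1 = 2 * precision * recall / (precision + recall)
    return {name: float(vals.mean() * 100) for name, vals in
            [("accuracy", accuracy), ("precision", precision),
             ("recall", recall), ("f1", f1)]}

# Made-up three-class confusion matrix with 50 samples per class.
cm = [[48, 1, 1],
      [2, 47, 1],
      [0, 3, 47]]
scores = macro_metrics(cm)
```

One-vs-rest accuracy counts true negatives for every class, so it sits above recall in multi-class settings. The sketch does not guard against classes that are never predicted, which would cause a division by zero in the precision term.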

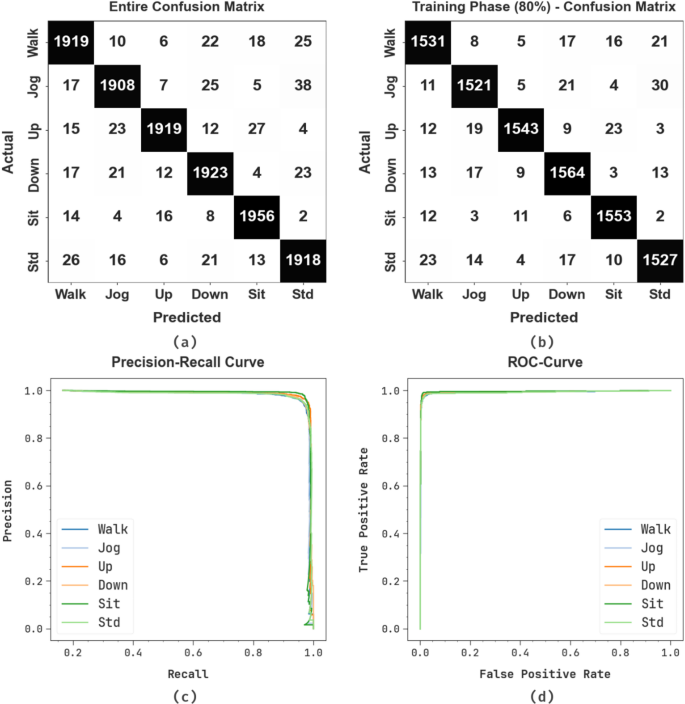

In Fig. 6, the training (TRA) \(acc{u}_{y}\) and validation (VAL) \(acc{u}_{y}\) results of the IDLTHAR-PDST approach under 80%TRPH and 20%TSPH are illustrated. The \(acc{u}_{y}\) values are calculated for 0–25 epochs. The figure shows that the TRA and VAL \(acc{u}_{y}\) values follow an increasing trend, which indicates that the performance of the IDLTHAR-PDST approach improves over the iterations. Additionally, the TRA and VAL \(acc{u}_{y}\) values stay close to each other across the epochs, which indicates reduced overfitting and supports steady prediction on unseen samples.

\(Acc{u}_{y}\) curve of IDLTHAR-PDST model under 80%TRPH and 20%TSPH

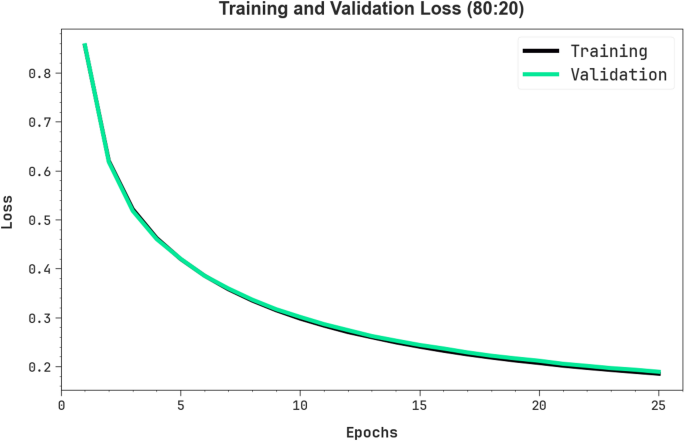

In Fig. 7, the TRA loss (TRALOS) and VAL loss (VALLOS) graph of the IDLTHAR-PDST approach under 80%TRPH and 20%TSPH is shown. The loss values are computed for 0–25 epochs. The TRALOS and VALLOS values follow a declining trend, indicating the ability of the IDLTHAR-PDST technique to balance the trade-off between generalization and data fitting. The continual fall in loss values also indicates that the IDLTHAR-PDST technique performs well and that its prediction outcomes are refined gradually.

Loss curve of IDLTHAR-PDST model under 80%TRPH and 20%TSPH.

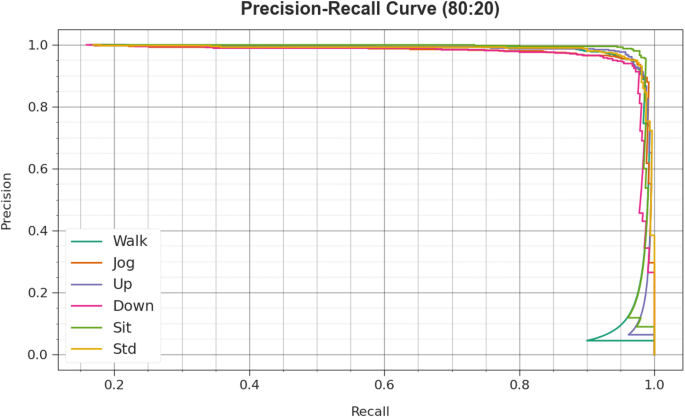

In Fig. 8, the precision-recall (PR) analysis of the IDLTHAR-PDST method under 80%TRPH and 20%TSPH gives insight into its performance by plotting precision against recall for all class labels. The figure shows that the IDLTHAR-PDST approach consistently achieves high PR values across the classes, indicating its capability to keep a large share of true positives among all positive predictions (precision) while also capturing a large share of the actual positives (recall). The consistently high PR values for each class indicate the competence of the IDLTHAR-PDST technique in the classification process.

PR curve of IDLTHAR-PDST model under 80%TRPH and 20%TSPH.

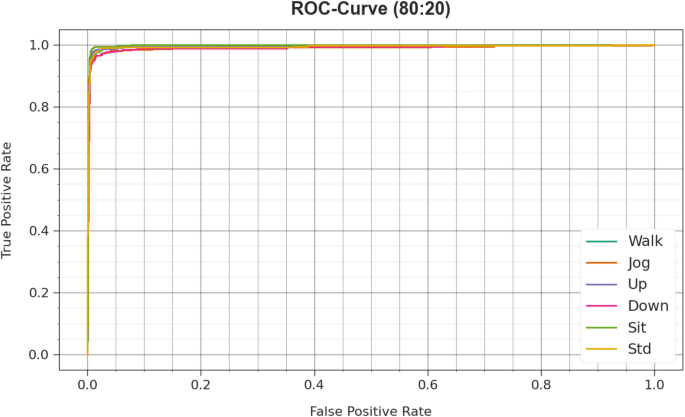

In Fig. 9, the ROC analysis of the IDLTHAR-PDST approach is examined. The results indicate that, under 80%TRPH and 20%TSPH, the IDLTHAR-PDST technique achieves high ROC results across all classes, signifying strong proficiency in differentiating the class labels. This consistent trend of high ROC values across the classes indicates the effectiveness of the IDLTHAR-PDST technique in predicting classes and the robustness of the classification process.

ROC curve of IDLTHAR-PDST model under 80%TRPH and 20%TSPH.

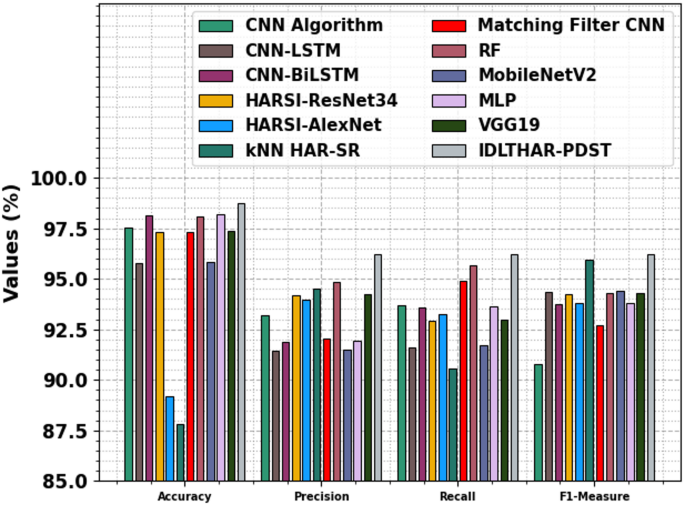

Table 4 and Fig. 10 present the comparative results of the IDLTHAR-PDST technique with recent methodologies [18, 32, 33, 34]. The results show that the CNN, CNN-LSTM, CNN-BiLSTM, HARSI-ResNet34, HARSI-AlexNet, kNN HAR-SR, Matching Filter CNN, MobileNetV2, MLP, and VGG19 methodologies deliver weaker performance. The RF technique comes closest, with \(acc{u}_{y}\), \(pre{c}_{n}\), \(rec{a}_{l}\), and \({F1}_{measure}\) of 98.06%, 94.84%, 95.69%, and 94.32%, respectively. The IDLTHAR-PDST approach achieves the best performance, with maximum \(acc{u}_{y}\), \(pre{c}_{n}\), \(rec{a}_{l}\), and \({F1}_{measure}\) of 98.75%, 96.24%, 96.24%, and 96.24%, respectively.

Comparative analysis of IDLTHAR-PDST model with existing approaches.

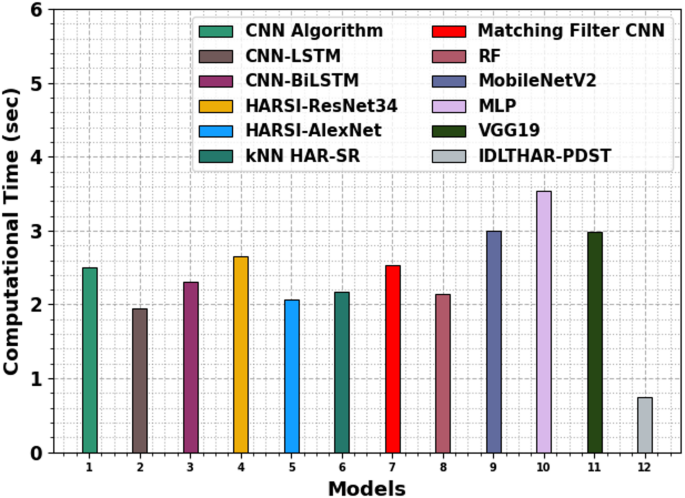

Table 5 and Fig. 11 present the computational time (CT) analysis of the IDLTHAR-PDST model with existing approaches. The CNN model takes 2.51 s, while the CNN-LSTM model is faster at 1.95 s. The CNN-BiLSTM model has a CT of 2.31 s. HARSI-ResNet34 takes 2.66 s, while HARSI-AlexNet is faster with a CT of 2.06 s. The kNN HAR-SR model has a CT of 2.17 s, and the Matching Filter CNN takes 2.53 s. The RF, MobileNetV2, MLP, and VGG19 models have CTs of 2.14, 3.00, 3.54, and 2.99 s, respectively, while the IDLTHAR-PDST model is the fastest with a CT of 0.75 s.

CT analysis of IDLTHAR-PDST model with existing approaches.

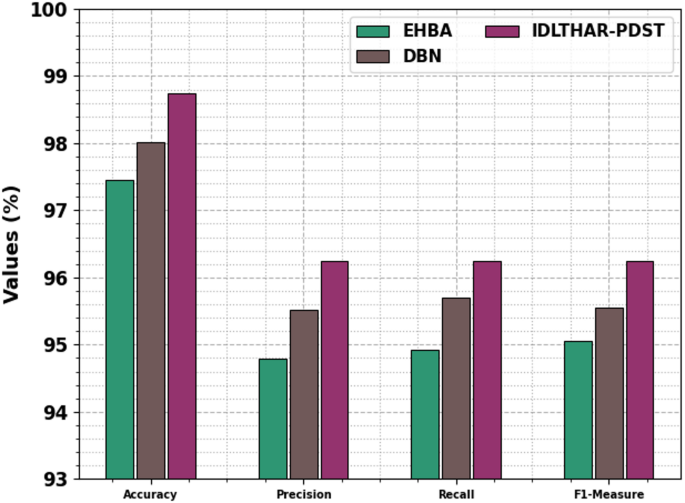

Table 6 and Fig. 12 present the ablation study of the IDLTHAR-PDST technique. The EHBA model achieved an \(acc{u}_{y}\) of 97.46%, \(pre{c}_{n}\) of 94.79%, \(rec{a}_{l}\) of 94.93%, and an \({F1}_{measure}\) of 95.05%. The DBN technique showed improved outcomes with an \(acc{u}_{y}\) of 98.02%, \(pre{c}_{n}\) of 95.52%, \(rec{a}_{l}\) of 95.7%, and an \({F1}_{measure}\) of 95.55%. The IDLTHAR-PDST methodology outperformed both with an \(acc{u}_{y}\) of 98.75%, \(pre{c}_{n}\) of 96.24%, \(rec{a}_{l}\) of 96.24%, and an \({F1}_{measure}\) of 96.24%. These results indicate that each component of the IDLTHAR-PDST methodology contributes to the overall performance. The consistent improvement across all metrics supports the robustness of the final model in multi-class classification scenarios.

Result analysis of the ablation study of IDLTHAR-PDST technique.
