Designing an innovative Multi-Criteria Decision Making (MCDM) framework for optimized teaching and delivery of physical education curriculum

The methodology adopted in this study provides a structured and rigorous approach for developing an MCDM framework tailored to enhance the teaching and delivery of PE curricula. It systematically integrates theoretical insights, stakeholder inputs, and analytical modeling techniques to ensure the framework’s relevance, validity, and applicability. The research process is organized into key phases: identification and classification of decision criteria, selection of an appropriate MCDM technique, instrument design, and structured data collection. Each phase is designed to ensure transparency, objectivity, and methodological coherence. The framework is grounded in both literature-based knowledge and empirical stakeholder engagement to reflect real-world educational needs. This section details each stage of the methodological process.

Research design

The research design of this study is strategically formulated to develop a comprehensive MCDM framework for optimizing the teaching and delivery of PE curricula. Recognizing the multifactorial and context-sensitive nature of physical education, the study employs a design-based, mixed-methods approach that integrates both qualitative and quantitative techniques. This blended strategy facilitates a deeper understanding of educational complexities while enabling structured analysis and evaluation of diverse decision factors. The design involves an iterative process of literature synthesis, stakeholder engagement, and modeling, with each phase contributing to the development and refinement of the proposed decision-support framework. Emphasis is placed on contextual validity, methodological rigor, and practical applicability, ensuring that the resulting model is both evidence-informed and adaptable to various educational environments. The use of MCDM further supports this goal by providing a robust analytical foundation for integrating multiple, and often conflicting, decision criteria in a rational and transparent manner.

Research paradigm

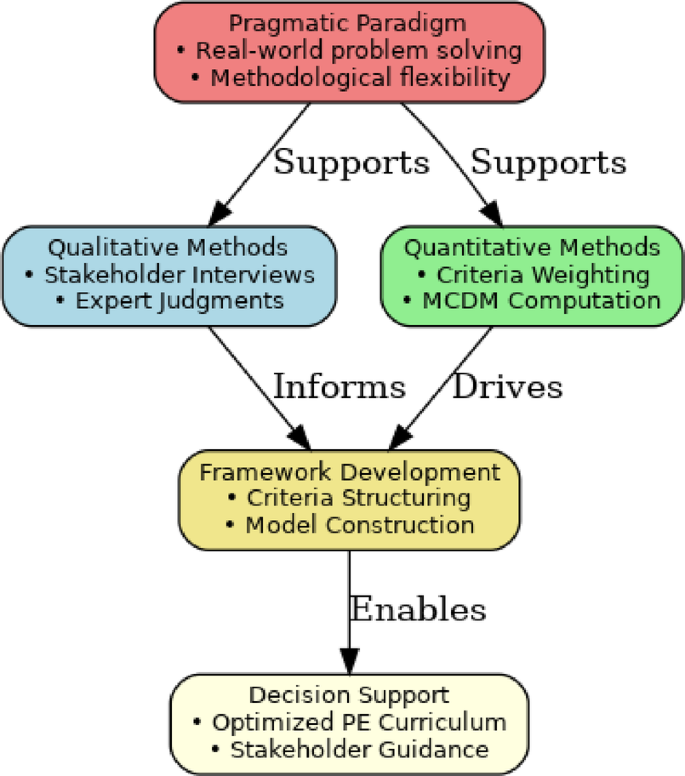

This study is grounded in the pragmatic research paradigm, which emphasizes the use of diverse methods to address complex, real-world problems. Pragmatism allows researchers to draw upon the strengths of both positivist (quantitative) and constructivist (qualitative) approaches to generate actionable knowledge. Given that the aim of this research is to produce a practical decision-making tool that accounts for both measurable outcomes and stakeholder values, pragmatism offers the most suitable philosophical foundation. Within this paradigm, the mixed-methods approach becomes a vehicle for methodological triangulation. Quantitative components (e.g., criteria weighting and scoring using MCDM techniques) are complemented by qualitative insights gathered through expert interviews and stakeholder consultations. The integration of these perspectives enables a richer, more nuanced framework that reflects the realities of curriculum planning and instructional delivery in physical education.

Methodological approach

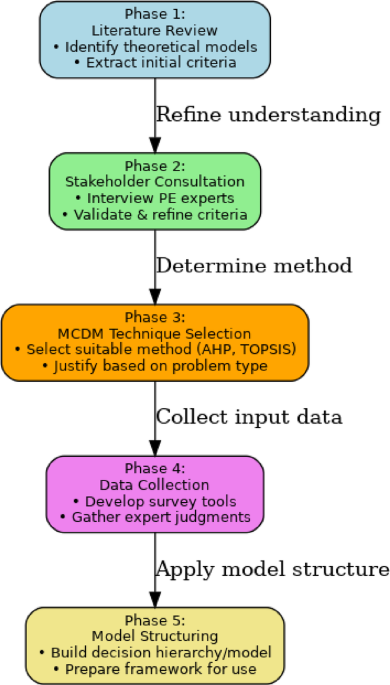

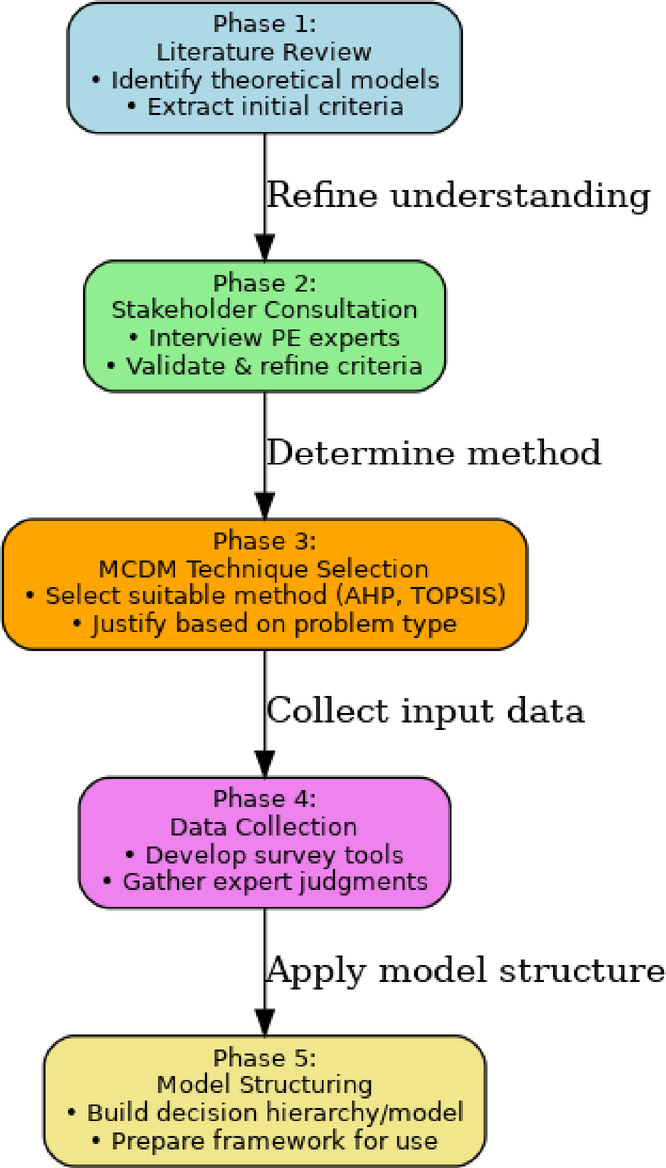

The methodological approach adopted in this study follows a sequential, multi-phase process designed to ensure logical coherence and empirical robustness. The process is divided into the following major stages:

1. Literature review and preliminary criteria identification: A comprehensive review of academic literature, institutional guidelines, and global PE standards was conducted to extract a broad list of potential decision criteria.
2. Stakeholder engagement and criteria refinement: Using expert interviews and structured workshops, the preliminary criteria were reviewed, validated, and refined based on relevance, feasibility, and context.
3. MCDM technique selection: Based on problem characteristics and decision complexity, an appropriate MCDM technique (e.g., AHP or Fuzzy TOPSIS) was selected. The decision matrix and framework structure were designed accordingly.
4. Instrument development and data collection: Data collection instruments, including pairwise comparison matrices and evaluation forms, were developed and administered to a sample of subject matter experts.
5. Model construction and evaluation: The collected data were processed to compute criteria weights and simulate decision scenarios. The MCDM framework was structured using hierarchical modeling and tested for internal consistency.

This structured approach ensures that the framework development is both data-informed and stakeholder-aligned, providing a reliable basis for curriculum-related decision-making in the context of physical education.

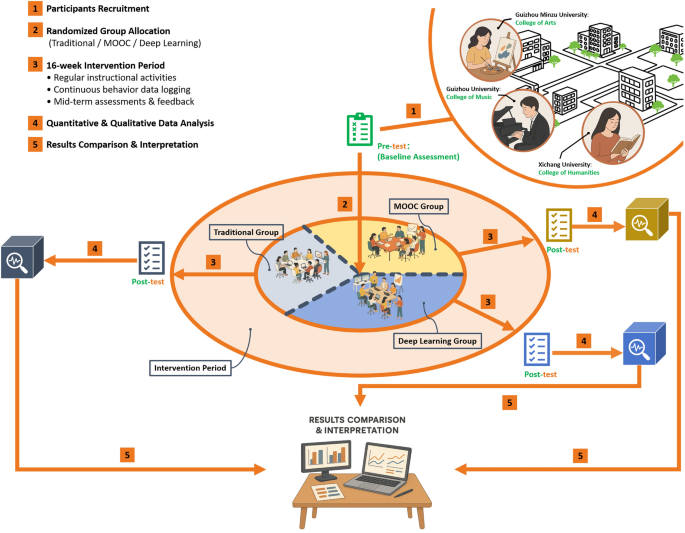

Sequential methodological phases for developing the MCDM framework.

The overall methodology for developing the MCDM framework was organized into five structured phases, beginning with literature review and culminating in model structuring (see Fig. 1). As depicted in Fig. 2, the theoretical foundation emphasizes methodological flexibility, where stakeholder insights and computational techniques converge to inform the MCDM framework for optimized PE curriculum delivery.

Theoretical foundation of the research design based on the pragmatic paradigm.

To ensure methodological rigor and coherence, the MCDM framework was developed through a structured five-phase process running from an initial literature review to final model structuring. Each phase built on the previous one: identification of theoretical foundations and criteria, stakeholder consultation for validation, selection of an appropriate MCDM technique, systematic data collection, and construction of a functional decision-making model. Table 1 summarizes these methodological phases, together with the associated techniques and anticipated outcomes at each stage of the framework’s development.

Criteria identification and structuring

Literature-Driven criteria extraction

The identification of evaluation criteria forms the backbone of any effective MCDM framework. In this study, a literature-driven approach was employed to extract the criteria that influence the teaching and delivery of PE curricula. This phase aimed to establish a comprehensive set of qualitative and quantitative indicators reflecting the multifaceted goals of PE, including cognitive development, motor skill acquisition, social interaction, inclusivity, and long-term fitness orientation. To this end, academic journals, policy reports, educational standards (e.g., UNESCO PE guidelines), and prior MCDM applications in education were reviewed across the domains of physical education, pedagogy, and MCDM. The search focused on sources published within the last 15 years, using key terms such as “physical education effectiveness,” “curriculum delivery,” “teaching quality,” “student engagement in PE,” and “MCDM in education.” This process yielded an initial pool of 21 distinct criteria, which were subsequently refined on the basis of overlap, relevance, and clarity.

The extracted criteria were grouped into five key dimensions: Pedagogical Effectiveness, Student Engagement, Resource Availability, Inclusivity and Accessibility, and Curriculum Flexibility. These preliminary criteria are summarized in Table 2 and served as the starting point for expert validation during the stakeholder consultation phase.

The MCDM Framework dataset, publicly available on Figshare (DOI: ), provides detailed stakeholder weights, instructional method scores, and pairwise comparison data to support further research on optimizing Physical Education curriculum delivery. It can be freely downloaded and used for academic and decision-making research purposes.

Stakeholder interviews and workshops

Following the initial literature-driven extraction of evaluation criteria, stakeholder engagement was conducted to validate, refine, and contextualize the preliminary list. Given the multidimensional nature of PE delivery, it was essential to gather insights from those directly involved in planning, executing, and experiencing the PE curriculum.

Structured interviews and focus group workshops were organized with a diverse group of stakeholders, including:

- Physical education teachers, who provided pedagogical perspectives and insight into classroom-level challenges.
- Curriculum experts, who evaluated alignment with national and institutional standards.
- School administrators, who addressed feasibility, policy, and resource constraints.
- Students, who shared their lived experiences, engagement levels, and preferences.

Participants were asked to assess the relevance, clarity, and practical applicability of the proposed criteria. Open-ended feedback was also collected to capture emerging or overlooked aspects. The feedback obtained was synthesized and used to revise the initial criteria pool—resulting in a reduced, more actionable list of core indicators suited for integration into the MCDM model.

Criteria grouping into dimensions

After the validation phase, the refined set of criteria was organized into five logical dimensions to facilitate structured decision modeling. This classification ensured conceptual clarity, ease of weighting during MCDM analysis, and better alignment with the operational priorities of educational institutions. The foundation of the MCDM framework is structured around five core evaluation dimensions that reflect the multifaceted nature of effective physical education delivery. These dimensions were derived from both literature and stakeholder insights to ensure theoretical validity and contextual relevance. As outlined in Table 3, the dimensions include Pedagogical Quality, Resource Availability, Student Engagement, Curriculum Flexibility, and Inclusivity & Accessibility. Each dimension provides a focused lens through which sub-criteria are evaluated and instructional strategies are assessed.

MCDM technique selection

Technique justification

The success of an MCDM framework depends heavily on selecting a method that aligns with the nature of the decision problem, the type and precision of the available data, the number of alternatives and criteria involved, and the intended use of the decision model. Given the complex, multi-dimensional, and partially subjective nature of PE curriculum design and delivery, the chosen MCDM technique must support both qualitative judgments and quantitative evaluations.

In this study, the Analytic Hierarchy Process (AHP) was selected as the core MCDM technique for several key reasons:

1. Hierarchical structure compatibility: AHP supports decision problems that can be structured into a hierarchy, which is ideal for organizing dimensions such as Pedagogical Quality, Student Engagement, and Resource Availability, each with associated sub-criteria.
2. Pairwise comparison strength: AHP excels at converting subjective expert opinions into quantifiable weights using pairwise comparisons. This is particularly beneficial when dealing with human-centered criteria such as motivation, inclusivity, and instructional effectiveness.
3. Consistency verification: One of AHP’s major advantages is its built-in Consistency Ratio (CR) check, which verifies the logical coherence of expert judgments, a crucial quality control step when collecting data from multiple stakeholders.
4. Stakeholder-friendly: AHP is relatively easy for decision-makers to understand and apply in practical settings, especially in workshops involving teachers, administrators, and education specialists with diverse backgrounds.
5. Scalability and integration: AHP can be extended with fuzzy logic (Fuzzy AHP) if greater ambiguity or vagueness in input judgments needs to be handled.

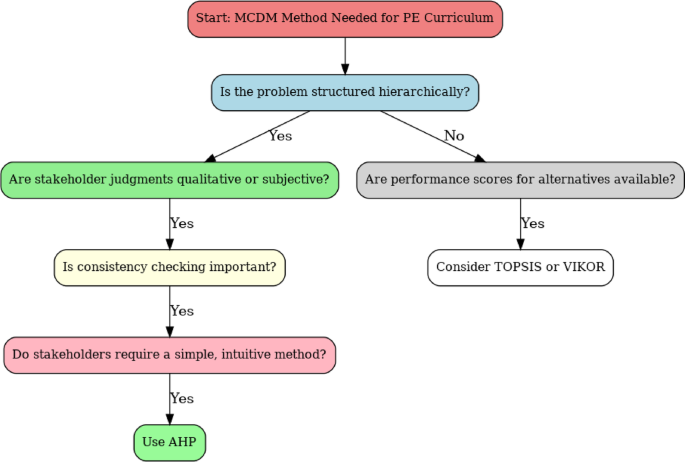

Alternative methods such as TOPSIS and VIKOR were considered for their ability to rank alternatives based on ideal or compromise solutions, but they require performance scores for every alternative, which are not always available or easily defined in educational settings. Fuzzy AHP was also reviewed, but because stakeholder inputs in this study were elicited in crisp, structured form through pairwise comparisons, standard AHP was deemed sufficient.

To ensure methodological alignment with the objectives and structure of the study, several MCDM techniques were evaluated before the final approach was selected: AHP, TOPSIS, VIKOR, and Fuzzy AHP. As summarized in Table 4, AHP was deemed the most appropriate because it accommodates hierarchical decision problems, incorporates expert input through pairwise comparisons, and validates the logical consistency of judgments. Although the other methods offered certain advantages, such as ranking by closeness to an ideal solution (TOPSIS) or identifying compromise solutions (VIKOR), they were less compatible with the data format and stakeholder profiles in this study.

MCDM technique selection flow for PE curriculum optimization.

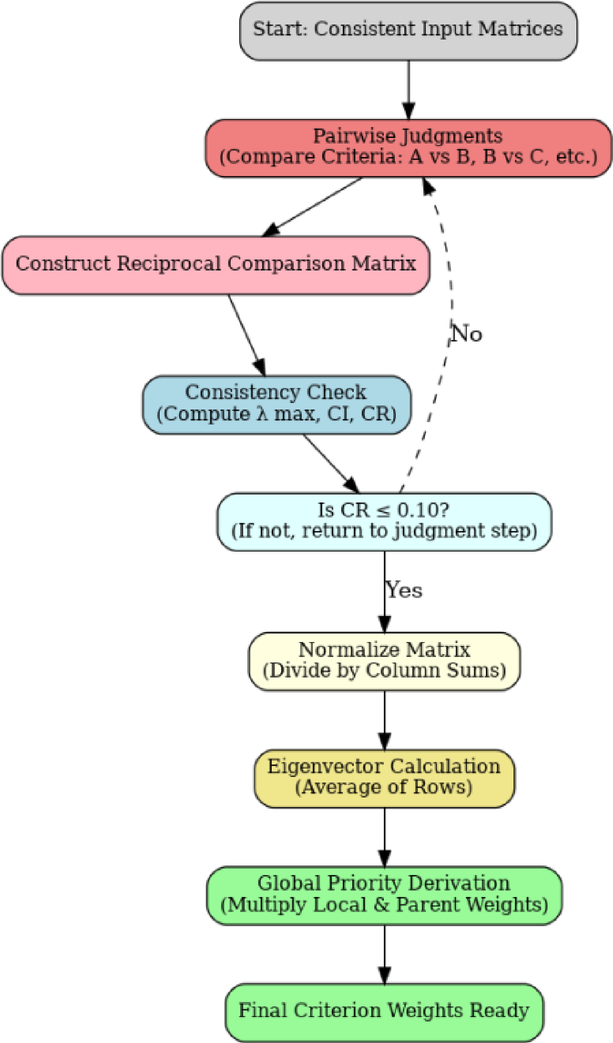

As illustrated in Fig. 3, the decision to use AHP was justified by the hierarchical nature of the problem, the need for consistency checks, and the preference for an intuitive method among stakeholders.

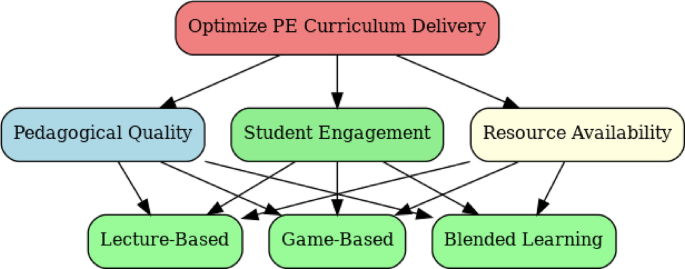

Framework modeling

The construction of the decision-making framework is a critical phase in developing a robust MCDM system, as it defines how the various criteria and sub-criteria interact and contribute to the evaluation of alternative strategies. In this study, AHP was used to structure the decision model as a hierarchy linking the overall goal, the main decision dimensions, their sub-criteria, and the alternative strategies, enabling transparent and consistent evaluation of teaching and delivery methods for the PE curriculum.

At the top level, the overarching goal of the model is defined as:

Optimize Teaching and Delivery of the Physical Education Curriculum.

The second level consists of the five main decision dimensions, identified and validated in earlier stages of the methodology:

1. Pedagogical quality.
2. Student engagement.
3. Resource availability.
4. Curriculum flexibility.
5. Inclusivity & accessibility.

Each of these dimensions is further decomposed into sub-criteria at the third level, representing more specific evaluation aspects. For instance:

- Pedagogical quality includes Instructional Effectiveness and Assessment Alignment.
- Student engagement includes Motivation and Participation.
- Resource availability includes Facility Access and Time Allocation.
- Curriculum flexibility includes Interdisciplinary Integration and Innovative Content.
- Inclusivity & accessibility includes Gender Equity and Support for Special Needs.

The bottom layer of the model includes alternative PE teaching and delivery strategies, which will be compared and ranked based on their performance against the weighted criteria and sub-criteria. These alternatives may include, for example:

- Lecture-based instruction.
- Game-based learning.
- Blended PE (technology + physical sessions).
- Project-based physical activities.

The hierarchical model ensures that expert judgments on each criterion’s importance and on the performance of each alternative can be incorporated consistently through pairwise comparisons. Once the hierarchy is finalized, the AHP computational steps are applied to derive normalized weights and to generate a ranked preference order for the strategies. As shown in Fig. 4, the hierarchical decision framework connects the primary goal with three of the core criteria (Pedagogical Quality, Student Engagement, and Resource Availability) and evaluates their influence across selected instructional strategies.

Hierarchical decision framework for PE curriculum optimization.

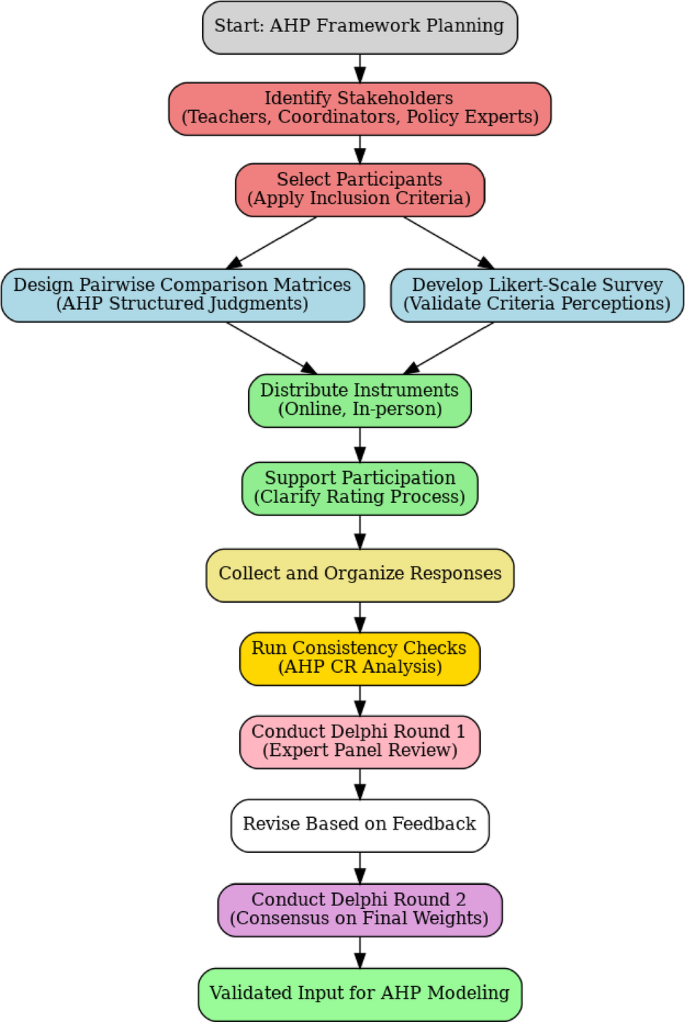
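The hierarchy described above can be expressed as a nested data structure. The Python sketch below is illustrative only: every weight and priority in it is a hypothetical placeholder, not a result of this study, and the names `HIERARCHY`, `LOCAL_PRIORITIES`, `global_weights`, and `rank_alternatives` are invented for the example. It shows how sub-criterion global weights follow from the dimension weights, and how AHP’s additive synthesis combines them with each strategy’s local priorities to produce a ranking.

```python
"""Sketch of the AHP hierarchy with hypothetical numbers (not study results)."""

# Dimension -> (local weight, {sub-criterion: local weight within the dimension}).
HIERARCHY = {
    "Pedagogical quality": (0.30, {"Instructional effectiveness": 0.6, "Assessment alignment": 0.4}),
    "Student engagement": (0.25, {"Motivation": 0.5, "Participation": 0.5}),
    "Resource availability": (0.20, {"Facility access": 0.7, "Time allocation": 0.3}),
    "Curriculum flexibility": (0.15, {"Interdisciplinary integration": 0.5, "Innovative content": 0.5}),
    "Inclusivity & accessibility": (0.10, {"Gender equity": 0.5, "Support for special needs": 0.5}),
}

ALTERNATIVES = ["Lecture-based", "Game-based", "Blended PE", "Project-based"]

# Hypothetical local priorities of each alternative under each sub-criterion;
# each list follows the order of ALTERNATIVES and sums to 1.
LOCAL_PRIORITIES = {
    "Instructional effectiveness": [0.20, 0.30, 0.30, 0.20],
    "Assessment alignment": [0.30, 0.20, 0.30, 0.20],
    "Motivation": [0.10, 0.40, 0.25, 0.25],
    "Participation": [0.10, 0.40, 0.20, 0.30],
    "Facility access": [0.40, 0.20, 0.15, 0.25],
    "Time allocation": [0.35, 0.25, 0.20, 0.20],
    "Interdisciplinary integration": [0.10, 0.20, 0.30, 0.40],
    "Innovative content": [0.10, 0.30, 0.35, 0.25],
    "Gender equity": [0.25, 0.25, 0.25, 0.25],
    "Support for special needs": [0.20, 0.30, 0.30, 0.20],
}


def global_weights(hierarchy):
    """Multiply each sub-criterion's local weight by its parent dimension's weight."""
    return {
        sub: dim_weight * sub_weight
        for dim_weight, subs in hierarchy.values()
        for sub, sub_weight in subs.items()
    }


def rank_alternatives(weights, priorities, alternatives):
    """Additive synthesis: score(a) = sum over sub-criteria of global weight * priority of a."""
    scores = {alt: 0.0 for alt in alternatives}
    for sub, weight in weights.items():
        for alt, priority in zip(alternatives, priorities[sub]):
            scores[alt] += weight * priority
    return sorted(scores.items(), key=lambda item: item[1], reverse=True)


if __name__ == "__main__":
    ranking = rank_alternatives(global_weights(HIERARCHY), LOCAL_PRIORITIES, ALTERNATIVES)
    for alt, score in ranking:
        print(f"{alt:15s} {score:.3f}")
```

Because each local priority vector and the set of global weights both sum to 1, the composite scores also sum to 1, which makes them directly comparable across strategies.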

Instrument design and data collection

This section describes the procedures adopted for participant selection, instrument development, and data collection in the context of building an AHP-based MCDM framework for optimizing PE curriculum delivery. The aim was to ensure high-quality input from relevant stakeholders and maintain methodological rigor across all data collection activities.

Participant selection

To ensure that the MCDM model reflects the practical realities of curriculum delivery and policy implementation in physical education, a purposive sampling strategy was used to select participants with domain expertise and direct involvement in PE program design, delivery, and assessment.

The criteria for participant inclusion were as follows:

- Professional role: Individuals directly involved in teaching, curriculum planning, or policy guidance for PE.
- Experience: A minimum of 3 years of experience in physical education or educational leadership.
- Institutional diversity: Representation from both public and private sector schools, colleges, and sports institutions.

While this study primarily involved PE teachers, academic coordinators, and policy advisors for the development and validation of the MCDM framework, we acknowledge the absence of direct student perspectives as a limitation. Students, being the primary beneficiaries of PE programs, offer critical insights into engagement, inclusivity, and learning experience—factors that significantly affect the effectiveness of instructional strategies. Although indirect student feedback was considered through expert interpretation and educational policy documents, future iterations of this research will aim to incorporate structured student input through surveys, focus groups, or participatory design methods to enrich the framework’s validity and practical relevance.

To ensure the relevance and credibility of the evaluation criteria and decision model, a diverse panel of experts was engaged in the study. These participants were drawn from three primary stakeholder groups: PE teachers, academic coordinators, and policy advisors. As shown in Table 5, the group included individuals with practical teaching experience, curriculum design expertise, and policy-level insight, thereby offering a well-rounded foundation for criterion validation and strategy evaluation.

Survey and questionnaire design

To capture expert input on the importance of various decision criteria and sub-criteria, two structured instruments were developed:

1. Pairwise comparison matrices (AHP method): These were used to derive relative weights for decision criteria. Each participant was asked to compare two criteria at a time on a 1–9 importance scale, following Saaty’s AHP methodology. The comparisons covered both the five main dimensions and their corresponding sub-criteria.
2. Likert-scale survey (5-point scale): This supplemental survey measured perceptions of the relevance, feasibility, and impact of each criterion. It provided validation for the final set of prioritized criteria used in the decision hierarchy.

All instruments were pilot-tested with two external experts to ensure clarity, consistency, and response time efficiency.
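Each respondent only needs to supply the upper triangle of a pairwise comparison matrix; the diagonal is 1 and the lower triangle follows from reciprocity. The Python sketch below, with a hypothetical function `reciprocal_matrix` and invented example judgments, shows one way to assemble the full matrix while checking completeness and restricting entries to Saaty’s 1–9 scale and its reciprocals. Exact fractions are used so that reciprocal entries stay exact.

```python
from fractions import Fraction
from itertools import combinations

# Values permitted on Saaty's 1-9 scale: 1..9 and their reciprocals 1/2..1/9.
ALLOWED = {Fraction(k) for k in range(1, 10)} | {Fraction(1, k) for k in range(2, 10)}


def reciprocal_matrix(criteria, judgments):
    """Build a full n x n reciprocal matrix from upper-triangle judgments.

    judgments[(a, b)] = k > 1 means a is k times as important as b;
    a value 1/k means b is k times as important as a.
    """
    pairs = list(combinations(criteria, 2))
    missing = [p for p in pairs if p not in judgments]
    if missing:  # completeness check before any computation
        raise ValueError(f"incomplete matrix, missing comparisons: {missing}")
    index = {name: k for k, name in enumerate(criteria)}
    n = len(criteria)
    matrix = [[Fraction(1)] * n for _ in range(n)]  # diagonal entries are 1
    for a, b in pairs:
        value = Fraction(judgments[(a, b)])
        if value not in ALLOWED:
            raise ValueError(f"{value} for {(a, b)} is not on Saaty's 1-9 scale")
        i, j = index[a], index[b]
        matrix[i][j] = value
        matrix[j][i] = 1 / value  # reciprocal entry: a_ji = 1 / a_ij
    return matrix


# Hypothetical judgments from a single respondent.
criteria = ["Pedagogical quality", "Student engagement", "Resource availability"]
judgments = {
    ("Pedagogical quality", "Student engagement"): 3,
    ("Pedagogical quality", "Resource availability"): 5,
    ("Student engagement", "Resource availability"): Fraction(1, 2),
}
for row in reciprocal_matrix(criteria, judgments):
    print([str(x) for x in row])
```

For a matrix of order n, this asks each respondent for n(n − 1)/2 judgments, for example 10 comparisons for the five main dimensions.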

Data collection procedure

Data were collected over a two-week period using a hybrid approach to maximize participation while maintaining standardization:

- Online format: Pairwise comparison matrices and surveys were deployed using Google Forms and Microsoft Excel templates with built-in validation features.
- In-person sessions: For participants preferring guided sessions, in-person meetings were conducted to ensure accurate understanding of pairwise comparison tasks.
- Delphi technique (Round 2): After initial analysis, a second round of validation was conducted with selected experts to review consistency ratios and approve the final weight allocations.

All data collection activities followed ethical research protocols, including informed consent, data confidentiality, and the option to withdraw at any time.

To ensure the robustness and clarity of the input data, multiple instruments were employed during the expert judgment collection phase: pairwise comparison matrices for deriving criterion weights, Likert-scale surveys to assess the clarity and relevance of the proposed criteria, and Delphi feedback sheets for final consensus building among selected experts. As presented in Table 6, each instrument was tailored to its task and participant group, ensuring consistency, efficiency, and methodological rigor throughout the data collection process.

Sequential workflow for developing the AHP-Based MCDM framework.

As illustrated in Fig. 5, the development workflow integrates structured judgment collection, iterative feedback, and validation mechanisms to ensure methodological rigor and stakeholder alignment in the AHP modeling process.

Criteria weighting and preference aggregation

Once the data were collected through pairwise comparison matrices and Likert-scale surveys, the next step was to verify the validity, consistency, and statistical soundness of the input before deriving the final decision weights. This section outlines the preprocessing methods used to validate expert responses and the computational procedures applied to generate priority weights for each criterion in the AHP hierarchy.

Data preprocessing and consistency checks

In the AHP method, consistency of judgments is paramount to ensure that the expert input can be reliably used to derive weights. During preprocessing, all pairwise comparison matrices submitted by participants were first screened for completeness and logical consistency.

The following steps were followed:

- Matrix completeness check: Ensured that all required comparisons were filled in the reciprocal matrix format.
- Consistency Ratio (CR) calculation: For each matrix, the Consistency Index (CI) was computed using Saaty’s method and compared against the Random Index (RI) to derive the CR:

  $$CR = \frac{CI}{RI}, \qquad \text{where} \quad CI = \frac{\lambda_{\max} - n}{n - 1}$$

  Here $\lambda_{\max}$ is the principal eigenvalue of the $n \times n$ pairwise comparison matrix, and RI is the average CI of randomly generated reciprocal matrices of the same order $n$.
- Acceptable threshold: A CR ≤ 0.10 was considered acceptable. Matrices exceeding this threshold were flagged, and respondents were either asked for clarification or their input was excluded from final aggregation.

This step ensured that only internally coherent judgments were used in computing criterion weights.

As part of the data validation process, all submitted pairwise comparison matrices were subjected to consistency analysis using Saaty’s AHP methodology. This evaluation ensured logical coherence in expert judgments before proceeding to weight derivation. As shown in Table 7, matrices with a CR ≤ 0.10 were accepted, while those exceeding the threshold were either flagged for re-evaluation or excluded from the final analysis. This step enhanced the reliability and methodological soundness of the resulting AHP model.
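The consistency screening can be sketched in a few lines of Python. In this illustrative version (function names `priority_vector`, `consistency_ratio`, and `screen` are hypothetical), λmax is estimated from an approximate priority vector as the mean of (Aw)ᵢ/wᵢ, RI uses Saaty’s commonly tabulated values for orders 1 to 10, and matrices are accepted when CR ≤ 0.10. The two example matrices are invented: one perfectly consistent and one with a deliberately cyclic preference.

```python
# Saaty's random consistency index (RI) for matrix orders 1-10.
RANDOM_INDEX = {1: 0.0, 2: 0.0, 3: 0.58, 4: 0.90, 5: 1.12,
                6: 1.24, 7: 1.32, 8: 1.41, 9: 1.45, 10: 1.49}


def priority_vector(a):
    """Approximate priorities: normalize each column, then average each row."""
    n = len(a)
    col_sums = [sum(a[i][j] for i in range(n)) for j in range(n)]
    return [sum(a[i][j] / col_sums[j] for j in range(n)) / n for i in range(n)]


def consistency_ratio(a):
    """CR = CI / RI, with CI = (lambda_max - n) / (n - 1)."""
    n = len(a)
    if n <= 2:
        return 0.0  # reciprocal matrices of order 1 or 2 are always consistent
    w = priority_vector(a)
    aw = [sum(a[i][j] * w[j] for j in range(n)) for i in range(n)]
    lambda_max = sum(aw[i] / w[i] for i in range(n)) / n  # principal eigenvalue estimate
    ci = (lambda_max - n) / (n - 1)
    return ci / RANDOM_INDEX[n]  # KeyError for n > 10 (no RI tabulated here)


def screen(matrices, threshold=0.10):
    """Split respondents into accepted (CR <= threshold) and flagged ones."""
    accepted, flagged = {}, {}
    for respondent, a in matrices.items():
        cr = consistency_ratio(a)
        (accepted if cr <= threshold else flagged)[respondent] = cr
    return accepted, flagged


w = [0.5, 0.3, 0.2]
consistent = [[wi / wj for wj in w] for wi in w]        # a_ij = w_i / w_j: fully consistent
cyclic = [[1, 9, 1 / 9], [1 / 9, 1, 9], [9, 1 / 9, 1]]  # A > B, B > C, yet C > A
accepted, flagged = screen({"EXP01": consistent, "EXP02": cyclic})
print("accepted:", accepted)
print("flagged:", flagged)
```

The participant codes follow the EXP01/EXP02 convention described under data privacy; in practice, flagged respondents would be contacted for clarification or excluded, as stated above.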

Weight derivation

After consistency was ensured, weights for each criterion and sub-criterion were derived using the eigenvector method within the AHP process. The normalization-and-averaging procedure in steps 2 and 3 below is the standard approximation of Saaty’s principal eigenvector.

Steps included:

1. Pairwise matrix aggregation: Individual matrices were aggregated element by element using the geometric mean to generate a group decision matrix.
2. Matrix normalization: Each entry of the group matrix was divided by its column total to yield a normalized matrix.
3. Weight extraction: The average of each row in the normalized matrix was computed to yield the relative (local) weight of each criterion.
4. Weight propagation: Sub-criteria weights were multiplied by their parent criterion weight to calculate the global priority for final decision-making.
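The four weight-derivation steps can be illustrated with a small Python sketch. The function names (`aggregate_geometric`, `local_weights`, `propagate`), the two expert matrices, and the parent weight of 0.30 are hypothetical; the example compares Instructional Effectiveness with Assessment Alignment so the arithmetic stays easy to follow by hand.

```python
import math


def aggregate_geometric(matrices):
    """Step 1: element-wise geometric mean of individual pairwise matrices."""
    k, n = len(matrices), len(matrices[0])
    return [[math.prod(m[i][j] for m in matrices) ** (1 / k) for j in range(n)]
            for i in range(n)]


def local_weights(a):
    """Steps 2-3: divide each entry by its column total, then average each row."""
    n = len(a)
    col_totals = [sum(row[j] for row in a) for j in range(n)]
    normalized = [[a[i][j] / col_totals[j] for j in range(n)] for i in range(n)]
    return [sum(row) / n for row in normalized]


def propagate(parent_weight, sub_weights):
    """Step 4: global weight = parent criterion weight x local sub-criterion weight."""
    return [parent_weight * w for w in sub_weights]


# Two hypothetical experts comparing Instructional Effectiveness (row 0)
# with Assessment Alignment (row 1).
expert_1 = [[1, 4], [1 / 4, 1]]
expert_2 = [[1, 1], [1, 1]]
group = aggregate_geometric([expert_1, expert_2])  # a_01 = sqrt(4 * 1) = 2
local = local_weights(group)
print(local)                   # local weights within Pedagogical quality
print(propagate(0.30, local))  # 0.30 is a hypothetical parent weight
```

The geometric mean is used for aggregation because it preserves reciprocity: if each expert’s matrix satisfies aᵢⱼ · aⱼᵢ = 1, the aggregated matrix does too, which an arithmetic mean would not guarantee.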

As shown in Fig. 6, the AHP weight derivation process involves constructing reciprocal matrices, validating consistency (CR ≤ 0.10), and computing eigenvectors to obtain local and global weights for each criterion.

AHP weight derivation process.
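Where the exact principal eigenvector is preferred over the column-normalization approximation, power iteration is a simple way to compute it for a positive reciprocal matrix. The Python sketch below (hypothetical function `principal_eigenvector` and an invented, mildly inconsistent 3 × 3 matrix) repeatedly multiplies a starting vector by the matrix and renormalizes it until it stops changing; λmax then follows directly, because the vector sums to 1.

```python
def principal_eigenvector(a, tol=1e-12, max_iter=1000):
    """Power iteration on a positive reciprocal matrix.

    Returns the principal eigenvector normalized to sum to 1, and lambda_max.
    """
    n = len(a)
    w = [1.0 / n] * n  # uniform starting vector
    for _ in range(max_iter):
        aw = [sum(a[i][j] * w[j] for j in range(n)) for i in range(n)]
        total = sum(aw)
        new_w = [x / total for x in aw]
        converged = max(abs(x - y) for x, y in zip(new_w, w)) < tol
        w = new_w
        if converged:
            break
    aw = [sum(a[i][j] * w[j] for j in range(n)) for i in range(n)]
    return w, sum(aw)  # sum(w) == 1, so A w = lambda w implies lambda = sum(A w)


a = [[1, 3, 5], [1 / 3, 1, 2], [1 / 5, 1 / 2, 1]]  # hypothetical judgments
w, lambda_max = principal_eigenvector(a)
print([round(x, 4) for x in w], round(lambda_max, 4))
```

For a perfectly consistent matrix, the result coincides with the approximate weights and λmax equals n; as judgments become less consistent, λmax grows above n, which is exactly what the CI in the consistency check measures.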

Ethical considerations

Upholding ethical standards is a critical aspect of any research involving human participants, particularly in decision-making studies that rely on expert input and judgment. This study adhered to institutional and international ethical guidelines throughout the design and data collection phases. The key ethical considerations included obtaining informed consent, ensuring voluntary participation, and implementing strict measures for data privacy and participant anonymity.

Informed consent

Prior to engaging in any form of data collection, all participants were provided with a detailed Participant Information Sheet (PIS) outlining the purpose of the research, the methodology, their expected role in the study, and the use of collected data. The document clearly stated that:

- Participation was entirely voluntary, with no obligation to continue at any point.
- Participants could withdraw from the study at any stage without providing a reason.
- No personally identifiable information would be linked to their responses.

Participants were required to sign an Informed Consent Form (ICF) confirming that they understood the information presented, agreed to participate voluntarily, and acknowledged their rights. For online data collection, consent was digitally recorded through mandatory checkboxes before the survey could proceed.

Data privacy and anonymity

To ensure confidentiality and protect participants’ identities, the following data protection strategies were implemented:

- Anonymization: All survey and matrix responses were coded using unique identifiers, ensuring that data could not be traced back to individuals.
- Secure storage: Data files were stored in password-protected folders on encrypted institutional servers, accessible only to the research team.
- No attribution in reporting: In all tables, figures, and analyses, responses were presented in aggregate form or anonymized using participant IDs (e.g., EXP01, EXP02).
- Ethics approval: The research protocol, consent forms, and data collection tools were submitted to the institutional ethics review board and received formal approval prior to implementation.

Ethical considerations were a central component of this study, particularly given its reliance on expert input for decision modeling.
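The coding of responses with participant IDs such as EXP01 can be sketched as follows. This Python example is a hypothetical illustration (the function `code_responses` and the sample data are invented): codes are assigned in shuffled order using the operating system’s random source, so that the numbering does not mirror, for instance, the alphabetical order of names. The sketch keeps no name-to-code key; the source does not state whether such a key was retained.

```python
import random


def code_responses(responses, prefix="EXP", rng=None):
    """Replace participant names with codes such as EXP01, EXP02, ...

    Names are shuffled before numbering so that the code order reveals
    nothing about who the participants are. No linkage key is returned.
    """
    rng = rng or random.SystemRandom()
    names = list(responses)
    rng.shuffle(names)
    return {f"{prefix}{k:02d}": responses[name] for k, name in enumerate(names, start=1)}


# Hypothetical raw responses keyed by participant name (never reported).
raw = {"Participant A": (4, 5, 3), "Participant B": (5, 4, 4), "Participant C": (3, 3, 5)}
coded = code_responses(raw)
print(sorted(coded))  # ['EXP01', 'EXP02', 'EXP03']
```

Only the coded dictionary would then be used in tables, figures, and analyses.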
