A deep learning-based intelligent curriculum system for enhancing public music education: a case study across three universities in Southwest China

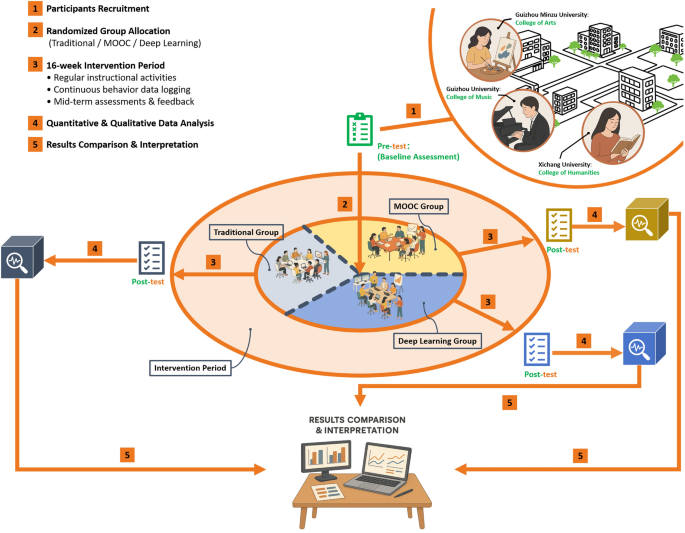

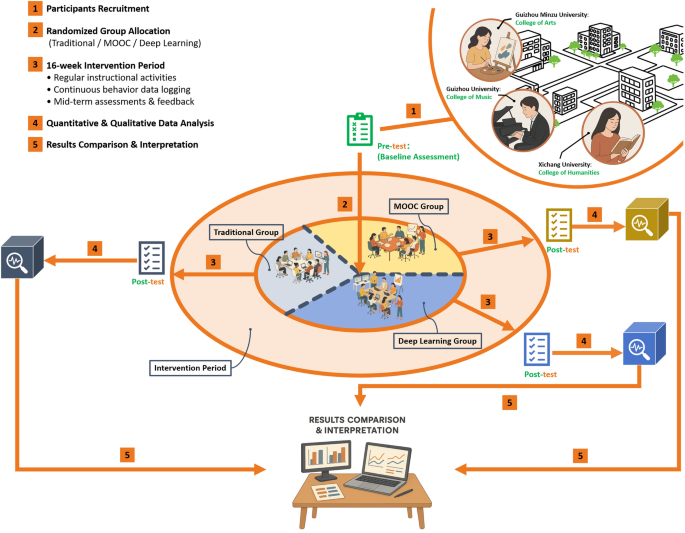

Research design and case selection

This study employed a semester-long, controlled experimental approach to investigate how deep learning-enhanced methods could improve public music education efficiency at comprehensive universities in Southwest China. To ensure regional representativeness and practical validity, three distinct institutions were purposively selected: the College of Arts at Guizhou Minzu University, the College of Music at Guizhou University, and the College of Humanities at Xichang University. These institutions represent different regional educational contexts and varying levels of institutional resources, enabling generalizable insights across diverse university settings.

Participants included a total of 113 first-year undergraduate students enrolled in non-music major classes across the three universities. To ensure methodological rigor and comparability, the following inclusion criteria were applied:

-

1.

Academic Status: First-year undergraduate students enrolled in mandatory public music education courses

-

2.

Musical Background: No formal music training exceeding 2 years or professional music education background

-

3.

Age Range: 18–22 years old to ensure developmental homogeneity

-

4.

Technology Access: Reliable access to mobile devices and internet connectivity for platform interaction

-

5.

Informed Consent: Voluntary participation with signed informed consent forms

Prior to the experimental intervention, comprehensive baseline assessments were conducted to validate homogeneity among participants and enable proper statistical analysis. The baseline evaluation included:

-

Musical Proficiency Pre-test: A standardized 50-item assessment covering rhythm recognition, pitch identification, basic music theory, and listening comprehension, scored on a 0–1 normalized scale

-

Demographic Questionnaire: Age, gender, academic major, prior musical experience, and socioeconomic background

-

Learning Style Inventory: Visual, auditory, and kinesthetic learning preference assessment using Kolb’s Learning Style Inventory

-

Technology Familiarity Survey: Self-reported comfort level with digital learning platforms and mobile applications

-

Musical Interest and Motivation Scale: 5-point Likert scale assessment of intrinsic motivation toward music learning

Stratified randomization was employed to ensure balanced group allocation across key demographic and academic variables. Participants were first stratified by university, gender, and academic major, then randomly assigned to experimental conditions within each stratum using computer-generated random numbers.

Subsequently, students were systematically randomized into three distinct instructional groups within each university:

-

1.

A control group using traditional face-to-face instructional methods;

-

2.

A blended instruction group that combined traditional teaching with MOOC-based supplementary resources;

-

3.

An experimental group employing a mobile cloud-based music education platform integrated with deep learning algorithms (LSTM and Transformer architectures) for personalized instruction and recommendations.

The intervention was conducted over a 16-week period, aligning with the duration of a complete academic semester. Granular, continuous data logging was employed to systematically capture students’ learning behaviors, encompassing metrics such as video viewing durations, frequencies of interactive engagement, chapter completion rates, and performance outcomes on assessments. A formative assessment, administered at the midpoint of the semester, served to deliver interim feedback, enabling instructors to make evidence-based adjustments to their pedagogical strategies. Upon conclusion of the intervention, participants undertook a comprehensive post-test designed to objectively measure advancements in music proficiency. Furthermore, an anonymous survey was distributed to elicit qualitative insights, probing students’ levels of satisfaction, engagement, perceived instructional effectiveness, and evaluations of the digital platform’s usability, thereby enriching the understanding of the learning experience.

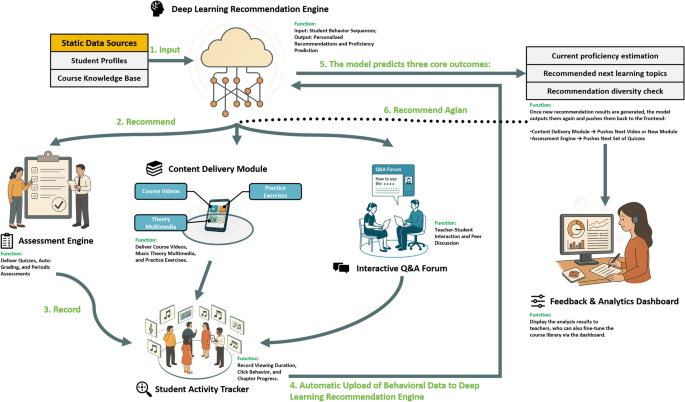

The experimental framework, which included group allocation, instructional design, data collection methodologies, and the sequence of evaluative measures, is illustrated in Fig. 1. Comprehensive demographic profiles and specific group assignments for participants from each of the collaborating institutions–Guizhou Minzu University, Guizhou University, and Xichang University–are meticulously documented in Table 1. These elements collectively underpin the study’s methodological rigor, ensure transparency, and facilitate the potential for replication across varied institutional contexts, thereby contributing to the robustness and generalizability of the findings.

Overall Experimental Design and Participant Workflow.

Platform architecture and deep learning modules

To meet the increasing demand for personalized, scalable, and efficient music education in comprehensive universities, we developed a mobile cloud-based platform that integrates modular educational services with intelligent deep learning-driven recommendation systems. The platform was specifically designed to address the challenges of student heterogeneity, limited faculty resources, and real-time instructional adaptation in public music education. This section elaborates on the system architecture, the embedded deep learning framework, and the computational models used for behavior prediction and content recommendation.

Platform architecture overview

The overall architecture of the mobile music education cloud platform is composed of five major service layers and an intelligent backend engine. The system structure is visualized in Fig. 2, which presents the data flow and modular interactions. The key modules include:

Mobile Music Education Cloud Platform.

-

1.

Content Delivery System: Serves curated instructional videos, multimedia music examples, and interactive sheet music exercises;

-

2.

Student Activity Tracker: Monitors and records fine-grained behavioral metrics such as watch duration, quiz attempts, playback rate, response latency, and platform navigation;

-

3.

Assessment Engine: Issues formative and summative quizzes, auto-scores results, and generates knowledge proficiency snapshots;

-

4.

Feedback and Analytics Dashboard: Provides real-time visual feedback to students and instructors about progress, recommendation triggers, and usage patterns;

-

5.

Interactive Q&A Forum: Enables asynchronous engagement with instructors and peers for collaborative learning and clarification.

The backend connects to two static data sources: a Course Knowledge Base, which defines topic dependencies and semantic content metadata, and Student Profile Data, which includes demographics, academic history, and musical background. These components collectively interact with the Deep Learning Recommendation Engine, which continuously processes behavioral sequences and dynamically updates personalized learning paths. The entire system is deployed through both mobile and web interfaces, ensuring seamless access across devices.

Deep learning model architecture

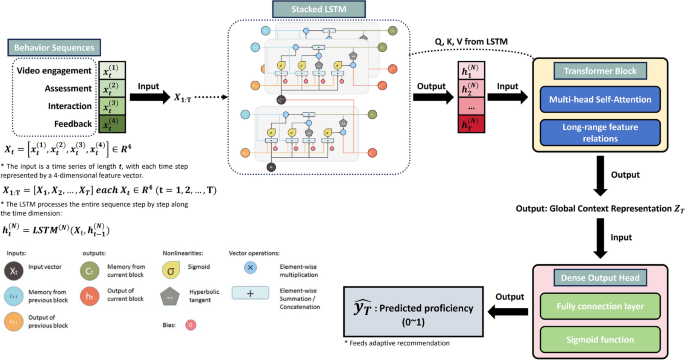

The core of the intelligent system is a deep sequence modeling architecture designed to predict each student’s future music proficiency and recommend optimal content accordingly. As illustrated in Fig. 3, the architecture consists of the following components:

Deep Learning Sequence Model for Student Proficiency Prediction.

-

1.

Input Layer: The model takes as input a time-series of multivariate feature vectors \(\textbf{x}_t \in \mathbb {R}^d\) that represent student activity at each time step t. These features include:

-

Video engagement: duration, replays, playback speed.

-

Assessment data: scores, attempt count, error types.

-

Interaction frequency: page switches, scrolls, Q&A posts.

-

Historical recommendation feedback: click-throughs, dismissals.

Prior to sequence encoding, each \(\textbf{x}_t\) is optionally normalized and, if necessary, projected to a common latent dimension to ensure feature compatibility.

-

-

2.

LSTM Encoder: A stacked Long Short-Term Memory (LSTM) network processes the behavioral sequence to capture temporal dependencies and evolving learning patterns:

$$\begin{aligned} \textbf{h}_t = \text {LSTM}(\textbf{x}_t, \textbf{h}_{t-1}) \end{aligned}$$

(1)

The hidden state \(\textbf{h}_t\) encodes the student’s latent knowledge state and behavior dynamics by combining past and current interactions. The LSTM’s internal gating mechanism (input, forget, and output gates) allows the network to retain or discard information at each step, which is critical for modeling long-range dependencies.

-

3.

Transformer Block: The entire sequence of LSTM hidden states \(\{\textbf{h}_1, \dots , \textbf{h}_T\}\) is then fed into a multi-head self-attention Transformer block to enhance long-range pattern recognition and inter-feature relationships:

$$\begin{aligned} \text {Attention}(Q, K, V) = \text {softmax}\!\Big (\frac{QK^\top }{\sqrt{d_k}}\Big ) V, \end{aligned}$$

(2)

where queries Q, keys K, and values V are learned linear projections of the LSTM outputs.

-

4.

Dense Output Head: The final encoded representation \(\textbf{z}_T\) from the Transformer block is passed through a fully connected layer with sigmoid activation to yield a scalar proficiency prediction:

$$\begin{aligned} \hat{y}_T = \sigma (\textbf{w}^\top \textbf{z}_T + b), \quad \hat{y}_T \in [0, 1]. \end{aligned}$$

(3)

This output \(\hat{y}_T\) represents the estimated mastery probability for the current course level. In deployment, the predicted score \(\hat{y}_T\) directly determines the selection and ranking of recommended modules for the student, balancing proficiency fit and content diversity as enforced by the additional loss term.

Model objective function and optimization

To train the deep learning model for student proficiency prediction and adaptive content recommendation, we define a formal objective based on student interaction sequences and post-intervention proficiency scores.

Let the training dataset be denoted as

$$\begin{aligned} \mathcal {D} = \left\{ \left( \textbf{x}_{1:T}^{(i)}, y_T^{(i)} \right) \right\} _{i=1}^N \end{aligned}$$

(4)

where:

-

\(\textbf{x}_{1:T}^{(i)} = [\textbf{x}_1^{(i)}, \textbf{x}_2^{(i)}, \dots , \textbf{x}_T^{(i)}]\) is the sequential behavioral feature vector of student i, observed over T time steps. Each \(\textbf{x}_t \in \mathbb {R}^d\) represents the student’s interaction data at time t, including variables such as video engagement duration, quiz performance, content completion flags, and interaction frequency.

-

\(y_T^{(i)} \in [0,1]\) denotes the ground-truth normalized proficiency score (e.g., post-test performance), reflecting the student’s mastery level at the end of the learning sequence.

The core objective is to minimize the prediction error between the model’s output \(\hat{y}_T^{(i)}\) and the actual proficiency label \(y_T^{(i)}\), for all students in the training set. This is achieved via a standard Mean Squared Error (MSE) loss:

$$\begin{aligned} \mathcal {L}_{\text {MSE}} = \frac{1}{N} \sum _{i=1}^{N} \left( \hat{y}_T^{(i)} – y_T^{(i)} \right) ^2 \end{aligned}$$

(5)

This loss function ensures that the model captures the mapping from sequential behavioral features to a scalar prediction of learning proficiency, penalizing large deviations from the ground truth.

Diversity-Aware Recommendation Loss

To further enhance the relevance and variety of recommended content, we introduce an auxiliary loss term that encourages recommendation diversity. Given a set of K recommended items \(\{ r_1, r_2, …, r_K \}\) for a particular student, where each \(r_i \in \mathbb {R}^m\) is a feature embedding of a recommended item, we define the diversity loss as:

$$\begin{aligned} \mathcal {L}_{\text {div}} = \lambda \sum _{\begin{array}{c} i,j = 1 \\ i \ne j \end{array}}^{K} \text {cos}\_\text {sim}(r_i, r_j) \end{aligned}$$

(6)

Here:

-

\(\text {cos\_sim}(r_i, r_j) = \frac{r_i^\top r_j}{\Vert r_i\Vert \Vert r_j\Vert }\) is the cosine similarity between two recommended items.

-

\(\lambda> 0\) is a manually tuned weighting coefficient that controls the strength of the diversity regularization.

This term penalizes highly similar items being recommended together, thus promoting semantic or topical variety in learning materials presented to students.

Final Objective and Optimization Strategy

The total loss function used during training is the weighted sum of the predictive and diversity components:

$$\begin{aligned} \mathcal {L}_{\text {total}} = \mathcal {L}_{\text {MSE}} + \mathcal {L}_{\text {div}} \end{aligned}$$

(7)

In practice, \(\lambda\) is initialized between 0.01 and 0.1, depending on the recommendation pool size and the desired trade-off between accuracy and diversity. In future implementations, a dynamic weighting schedule may be adopted where \(\lambda\) decays over training epochs to prioritize accuracy in later stages.

The model is trained end-to-end using the Adam optimizer, with default hyperparameters (\(\beta _1 = 0.9\), \(\beta _2 = 0.999\), learning rate \(\alpha = 0.001\)). We employ early stopping based on the validation loss to prevent overfitting. Training is conducted on a GPU-accelerated server with batch-wise gradient updates and dropout layers for regularization.

Additionally, the dataset is randomly split into 70% training, 15% validation, and 15% test sets. Model performance is evaluated based on RMSE, MAE, and recommendation coverage metrics on the test set.

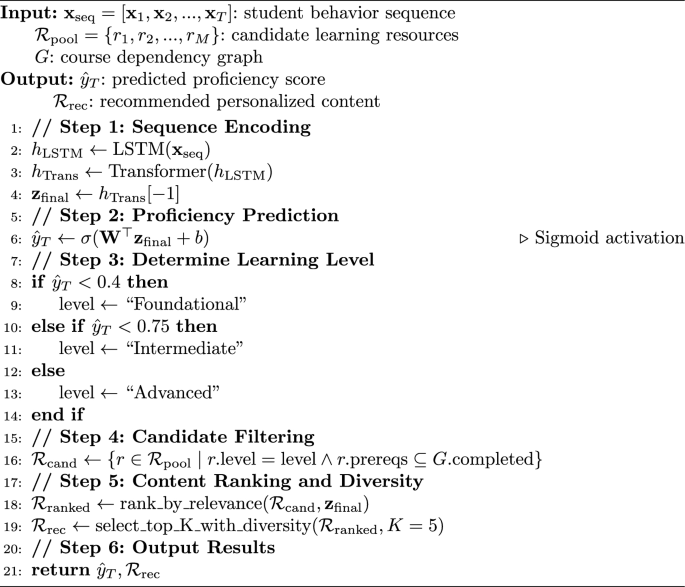

Model inference pseudocode

Once the deep learning model is fully trained and validated, it is deployed to the backend of the mobile music education platform, where it functions as the core engine for real-time personalized instruction. The model operates continuously throughout the learning process, enabling the system to monitor and respond to students’ evolving learning needs in a timely and adaptive manner. This inference mechanism is triggered automatically whenever a student completes a meaningful interaction—such as watching a video, submitting a quiz, or responding to a recommendation—ensuring that the instructional content remains closely aligned with the learner’s current status.

The inference pipeline serves two primary functions:

-

Proficiency Prediction: Based on the student’s historical interaction sequence (including video engagement metrics, quiz performance, content navigation behavior, and system feedback), the model predicts the student’s current learning proficiency. This prediction is expressed as a scalar score

$$\begin{aligned} \hat{y}_T \in [0, 1], \end{aligned}$$

where a higher value indicates greater mastery of the current course material.

-

Personalized Content Recommendation: The predicted proficiency score is then used to determine the most appropriate content level—foundational, intermediate, or advanced. The model filters a global pool of available learning resources to extract those that match the learner’s target level and prerequisite history. Candidate materials are subsequently ranked based on relevance to the learner’s encoded behavior vector. To further enhance engagement, a diversification function is applied to avoid redundant or overly similar recommendations.

This process runs in real time and scales efficiently across hundreds of students. The formal structure of the inference logic is presented in Algorithm 1, which outlines the core procedure for behavior encoding, prediction, filtering, and recommendation.

Adaptive Deep Learning-Based Proficiency Prediction and Personalized Content Recommendation

Curriculum framework design

Building on the controlled experimental design implemented across three comprehensive universities in Southwest China and the technical platform architecture, this study developed a hierarchically structured and modular public music curriculum as the instructional foundation for intervention. The curriculum is specifically tailored for non-music-major students, aiming to accommodate diverse levels of prior musical knowledge, motivational profiles, and cognitive learning styles. It is tightly integrated with the deep learning-based recommendation system to enable differentiated instruction and dynamic content delivery.

The curriculum is divided into three progressive tiers: Foundational, Intermediate, and Advanced. Each tier corresponds to a specific stage in students’ music literacy development and is defined by explicit learning objectives, content types, and assessment strategies.

-

1.

Foundational Tier: Designed for students with limited musical background or low predicted proficiency scores (e.g., \(\hat{y}_T < 0.4\)). It focuses on developing core musical competencies such as rhythm recognition, pitch identification, and basic music theory. Instructional content includes short video lectures, identification exercises, and listening-based quizzes.

-

2.

Intermediate Tier: Aimed at students with moderate proficiency levels (\(0.4 \le \hat{y}_T < 0.75\)), this tier introduces more complex musical structures, stylistic comparisons, and basic tasks in performance or composition. It incorporates interactive exercises and scaffolded assessments to enhance integrative learning.

-

3.

Advanced Tier: Targeted at students with high predicted proficiency (\(\hat{y}_T \ge 0.75\)) or strong engagement, this tier emphasizes critical listening, creative musical expression, and the use of digital tools such as mixing software or notation programs. Personalized challenges and open-ended assessments are used to foster higher-order thinking and sustained engagement.

Each content unit is encoded with standardized metadata tags—such as topic difficulty, prerequisite modules, musical genre, and cognitive skill level (e.g., recall, analysis, creation). These features serve as input parameters for the deep learning recommendation engine, enabling the platform to deliver content aligned not only with student proficiency but also with thematic relevance and learning style preferences.

The curriculum adopts a modular format that supports both sequential progression and flexible, non-linear navigation. This design allows the platform to dynamically adjust content delivery based on real-time learning signals such as quiz results or video completion. For example, a student with strong rhythm skills but weaker pitch recognition may receive targeted content focused on melodic training without redundant review of mastered material.

Assessment mechanisms are embedded across all tiers and include auto-graded quizzes, instructor-reviewed submissions, and reflective self-assessment surveys. These outputs feed directly into the model as learning signals, enabling a continuous feedback loop linking student performance, system prediction, and personalized content adjustment.

Overall, this curriculum framework aligns closely with the system’s technical architecture in terms of content granularity, structural layering, and platform integration. It supports both scalability in large-scale teaching contexts and the delivery of personalized instruction, serving as a critical pedagogical component for implementing AI-driven public music education.

Evaluation metrics and instruments

To assess the effectiveness of the proposed AI-assisted public music education framework, we adopt a combination of quantitative and qualitative evaluation metrics aligned with the two primary research objectives: (1) improving instructional efficiency, and (2) enhancing personalized learning outcomes.

-

1.

Instructional Efficiency Metrics We define instructional efficiency as the improvement in student learning outcomes relative to the resources and time invested. It is evaluated using:

-

Proficiency Gain: Measured by the difference between pre-test and post-test scores, normalized to the interval [0, 1].

-

Completion Rate: Percentage of students who finish all assigned modules within the intervention period.

-

Resource Utilization Efficiency: Ratio of time spent per module to the amount of proficiency improvement.

-

-

2.

Model Performance Metrics To evaluate the deep learning model’s prediction and recommendation performance, we use:

-

Root Mean Squared Error (RMSE): Between predicted and actual proficiency scores.

-

Top-K Recommendation Precision: Proportion of recommended items completed or rated positively by students.

-

Diversity Index: Average pairwise cosine dissimilarity among recommended items, aligned with the diversity loss function.

-

-

3.

Subjective Feedback Instruments Student engagement and perceived usefulness of recommendations are captured via:

-

A standardized satisfaction questionnaire using a 5-point Likert scale, covering recommendation relevance, platform usability, and motivation.

-

Optional open-ended reflection prompts, collected anonymously at semester end.

-

All quantitative scores are logged via the platform backend, while surveys are administered digitally to ensure accessibility and high response rates. This set of metrics provides a holistic view of both system-level efficiency and learner-level impact, forming the basis for comparative analysis across the three experimental groups.

Baseline model comparisons

To validate the necessity of the hybrid LSTM-Transformer architecture, we implemented several baseline models for comparison:

-

1.

Logistic Regression: Simple linear model using aggregated behavioral features

-

2.

Collaborative Filtering: Matrix factorization-based recommendation using user-item interactions

-

3.

Simple Neural Network: Three-layer feedforward network with ReLU activation

-

4.

LSTM-only Model: Single LSTM encoder without Transformer attention mechanism

-

5.

Rule-based System: Deterministic content recommendation based on predefined proficiency thresholds

All baseline models were trained on identical datasets using the same train/validation/test split (70%/15%/15%) to ensure fair comparison. Hyperparameter optimization was conducted using grid search with 5-fold cross-validation for each baseline model.

Ethics approval and consent to participate

This study involved human participants through questionnaire surveys, system usage monitoring, and academic performance assessments, but did not include any invasive procedures or experimental interventions beyond routine educational activities. According to current Chinese academic practice and national regulations, educational practice evaluation research of this nature does not require formal ethics committee approval; therefore, no IRB review was sought. All participants provided informed consent prior to participation, and all research procedures complied with relevant institutional and national guidelines45,46.

Exemption Justification: Based on institutional research ethics policies and Chinese educational research regulations, this study qualifies for ethical approval exemption on the following grounds:

-

1.

Routine Educational Activities: All instructional methods (traditional teaching, MOOC-based blended learning, and AI-enhanced personalized instruction) were implemented as part of normal curriculum delivery within regular coursework, with no experimental procedures beyond standard educational activities.

-

2.

Minimal Risk Assessment: The research posed no additional risk to participants beyond those ordinarily encountered in normal educational settings. Students engaged with learning materials appropriate to their academic level through standard pedagogical approaches.

-

3.

Anonymous Data Collection: All data were collected through routine educational technology systems and academic assessments, with complete anonymization implemented prior to analysis. No personally identifiable information was retained in the research dataset.

-

4.

Standard Educational Evaluation: Data collection involved only academic performance metrics, system usage logs, and anonymous feedback surveys that constitute standard components of educational assessment and course evaluation.

-

5.

Regulatory Compliance: Research activities were conducted in strict accordance with the Personal Information Protection Law of the People’s Republic of China45, the Measures for Science and Technology Ethics Review46, and institutional data protection policies of the participating universities, as well as established guidelines for educational practice research.

Informed Consent: Informed consent was obtained from all participants prior to their inclusion in the study. For participants under the age of 18, informed consent was additionally obtained from their legal guardians.

Regulatory Framework Applicability: This methodology aligns with established practices in educational research and technology evaluation within higher education institutions. Comparative analysis of pedagogical approaches using routine academic data falls under educational practice improvement rather than human subjects experimentation, thereby qualifying for ethical approval exemption under applicable research ethics guidelines.

link